Just to get the ball rolling...

One project I have been working on is getting an OV7670 camera working with an STM32 board.

I’ve had the OV7670 camera board for at least a year, but I found that it was almost unusable on the Uno or Mega Arduino, because the processor speed (16MHz) is too low on those AVR boards and they don’t have enough RAM to hold a useful image.

The other problem with using an AVR Arduino with those camera modules is that the non-FIFO module requires an external clock of at least 10MHz (I think some people manage to get them to work at 8MHz, but I think this is lower than the spec allows).

I know that it is possible to get the AVRs to output a clock, but one method requires special fuses to be programmed in the chip, so it’s hard to do this with just the Arduino IDE.

The STM32 boards look like a much better option for connecting to the camera: the bigger processors have up to 64k RAM, which is plenty to store a reasonable resolution frame; they can generate the 10MHz (or higher) clock just using PWM; and the 72MHz processor speed of all the STM32F103 parts lets them read and process the image data more easily than is possible on a 16MHz AVR board.
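To put rough numbers on the RAM argument, here is a minimal sketch of the frame-size arithmetic. The function and constant names are made up for illustration, and the 2 bytes per pixel figure assumes RGB565 output; the 2k figure is the SRAM size of the ATmega328P on the Uno.

```cpp
#include <cstddef>

// Bytes needed to hold one frame of the given geometry.
constexpr std::size_t frameBytes(std::size_t width, std::size_t height,
                                 std::size_t bytesPerPixel) {
    return width * height * bytesPerPixel;
}

// True if a frame fits in the given RAM (ignoring stack, heap and globals).
constexpr bool fitsInRam(std::size_t bytes, std::size_t ramBytes) {
    return bytes <= ramBytes;
}

constexpr std::size_t kRam64k = 64 * 1024;  // larger STM32F103 parts
constexpr std::size_t kRam2k  = 2 * 1024;   // ATmega328P (Uno)
```

With these, `frameBytes(160, 120, 2)` is 38400 bytes, which fits in 64k, while a QVGA frame (`frameBytes(320, 240, 2)`, 153600 bytes) does not, and even an 8 bit QQVGA frame is far too big for the Uno.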

Anyway, so much for the theory.

So far, I have managed to generate the clock, and also communicate with the camera via its SCCB bus (a derivative of I2C); however, I have had less luck reading an image.

I will post code and an update on this when I have more time.

The OV7670’s I2C is non-standard; it won’t run at the I2C speed that the STM32 uses (250kbps).

So I had to slow it down.

I found the code I was messing around with, and it does communicate via Wire (aka SCCB) to the camera, but I’ve no idea what setup is being sent to the camera, as I just took some example code, and the camera has hundreds of registers that need to be set up correctly for it to work well.

Also the pixel grabbing code is too slow, even for the STM32 running at 72MHz; having to monitor the HREF and VSYNC pins as well as waiting for the rising edge of the pixel clock doesn’t really work.

In the code I posted, it doesn’t even grab the pixel. If you look at line 392, it’s just incrementing the pixel counter, but this is where you need to put the code to read the pixel.

i.e. I never get a consistent pixel count.

I think what MrArduino does is not bother checking HREF; all he does is wait for VSYNC and then count the number of rising edges on the pixel clock (as just looking for rising edges and incrementing or decrementing a counter is possible even on a 16MHz AVR board).
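The edge counting idea can be tried out on a PC before touching the hardware. This is only a host-side sketch: `countRisingEdges` is a hypothetical helper that works on a vector of already sampled pin levels, not on the real PCLK pin.

```cpp
#include <cstddef>
#include <vector>

// Count 0 -> 1 transitions in a sequence of sampled pin levels.
std::size_t countRisingEdges(const std::vector<bool>& samples) {
    std::size_t edges = 0;
    for (std::size_t i = 1; i < samples.size(); ++i) {
        if (!samples[i - 1] && samples[i]) {
            ++edges;  // previous sample low, current sample high
        }
    }
    return edges;
}
```

If the count per frame is not the same every time, the sampling loop is too slow for the pixel clock, which is the symptom described above.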

Really what we need to do is use a Timer input and the DMA to get the data on every rising edge of the pixel clock, but I’ve not had time to work out how to set up both a Timer channel and a DMA channel to do this.

I know Victor_pv is busy with other DMA and Timer related stuff, but when he’s finished with that, he may have some ideas on how to set up the code to do the pixel grabbing with DMA.

BTW. To grab the pixels efficiently, you need all 8 bits of the pixel on the same port, e.g. PA or PB or PC etc.

So I ended up using an STM32F103RCT board, as this has 8 GPIOs on one of the ports.

Anyway,

At least you may be able to communicate over SCCB with your camera.

PS. It won’t communicate over SCCB unless you supply it with the 10MHz clock.

My code outputs the clock, but I’ve not looked at it for months, so I can’t remember which pin it’s on.

So you’ll need to read the code to work it out

This would be one of my future projects. I had one Pro Mini working with the camera (w/o FIFO), and I could really read pixels showing a real picture!

In that setup I just pushed the pixel data over a UART->WiFi bridge to a PHP script (Apache server), which then processed the image further.

Unfortunately, the data link was not always reliable, and the speed was awfully slow.

This time I will try to read only a subset of the whole image so that it fits in RAM. Alternatively, I could also use my external 64Mbit SRAM over SPI to temporarily store image data.

Btw, is there any external SRAM lib available for STM32? Maybe even with DMA transfer?

I will test your code and let you know if I can get some usable results.

Cheers,

Steve

I finally managed to get an image from the OV7670 camera chip.

I used the SW from Roger as a starting point. However, its camera settings did not do the right thing.

I had another project based on an ATmega328P Pro Mini which worked, so I imported those settings.

I also reworked the I2C part, which didn’t work at the beginning (I don’t know exactly why…).

My requirements were:

– use only gray scale 8 bit

– use lowest resolution to fit data into RAM

– use maximum pixel clock speed which still enables SW polling of PCLK.

So I came up to this attached version. The pinning is documented in the INO file.

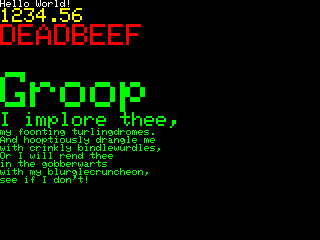

The image data is sent out to serial port. To visualize it, first copied the serial output to Notepad++, then used the plugin converter HEX->ASCII. Saved the data as img.raw.

Then I used ImageJ (http://imagej.nih.gov/ij/download/win32 … re8-64.zip), File->Import->RAW, 80(lines)x160(pixels), 8 bit.

I also attached a recorded picture in the package for reference.
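The Notepad++ conversion step could also be done with a few lines of code. This is a sketch only, and it assumes the serial dump consists of two-digit hex bytes, possibly separated by whitespace; the exact output format of the sketch is not shown in this thread, and `hexDumpToRaw` is a made-up name.

```cpp
#include <cstdint>
#include <string>
#include <vector>

// Value of one hex digit, or -1 if the character is not a hex digit.
int hexDigitValue(char c) {
    if (c >= '0' && c <= '9') return c - '0';
    if (c >= 'a' && c <= 'f') return c - 'a' + 10;
    if (c >= 'A' && c <= 'F') return c - 'A' + 10;
    return -1;
}

// Convert a text dump of two-digit hex bytes into raw bytes.
// Non-hex characters (spaces, newlines) are skipped; a trailing
// unpaired digit is dropped.
std::vector<std::uint8_t> hexDumpToRaw(const std::string& text) {
    std::vector<std::uint8_t> out;
    int high = -1;  // pending high nibble, or -1 if none
    for (char c : text) {
        const int v = hexDigitValue(c);
        if (v < 0) continue;
        if (high < 0) {
            high = v;
        } else {
            out.push_back(static_cast<std::uint8_t>(high * 16 + v));
            high = -1;
        }
    }
    return out;
}
```

Writing the returned bytes to img.raw should give a file that ImageJ can import with the settings above; for the 160 pixel by 80 line image the result should be 12800 bytes long.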

Have fun!

PS. Next step will be to OCR the picture on the STM32.

I know that getting the settings right seems to need some sort of Voodoo magic

(because there are so many of them).

I’m sure that it would be possible for the STM32 to DMA the image from the camera, but I never got around to figuring out how to set up all the registers that would be needed to do that.

But it’s good you have got something working.

The image seems to be 160×80, not 80×160. My brain OCR output is 3487!

Maybe you could add a section that outputs binary on serial instead of ASCII hex.

@zoomx – the camera setting used allows 160 (pixels) x 120 (lines), out of which I only use the first 80 lines; the resulting image geometry fits my needs.

@Roger – I also thought about using DMA, but as long as I don’t need a high frame rate (only one steady picture), I finally implemented the simplest solution.

Anyway, using DMA doesn’t seem to be very complicated (theoretically). The trick is to set up a timer in slave trigger mode (I think) to generate the DMA request as PCLK rising edges are detected. For this, the camera should be set to generate PCLK only when HREF is active (I have the respective line commented out in the INO file).

The relevant command is:

//wrReg(REG_COM10, BIT5); //pclk does not toggle on HBLANK

which is commented out in my INO file.

Thanks. That’s good to know.

I have been trying to get this functional on the Arduino (but not yet).

I would be glad to try this on my Stm32 ebay boards.

Below is the link:

https://forum.arduino.cc/index.php?topic=159557.720

The code should be easy to convert from Arduino to Stm32.

Below is the Arduino Code and Schematic, without a needed Level Converter for PCLK.

I’m not sure what your question was.

Have you read the previous posts?

This gives you more info, including code:

http://www.stm32duino.com/viewtopic.php?f=19&t=4#p11278

– for anybody interested.

For example, the Stm32 example code listed here only provides the 160×80 resolution,

– while the Arduino code provides Vga 640/320×240 Modes – which may be helpful modifications.

I just posted this – and have not dug up my Stm32 boards to try it yet.

I also have some 320×240 ili9341 LCDs that I can use with it.

– Just Ov7670 connected to Stm32F103c8 ebay board.

https://www.youtube.com/watch?v=TqSY6FETuos

He achieved something huge: 10fps on an Arduino.

I will be trying to run it on a Maple Mini.

He has all the code on GitHub, also for STM!

Worth a try!

If I get chance I will try it as well.

Using a ST7735 based display.

This one is Arduino nano based.

https://github.com/indrekluuk/LiveOV7670

I have one with and one without FIFO.

I’m not sure which version these repos use, but I can find out.

From what I recall the FIFO doesn’t help a lot in the image capture, as you still seem to need to read the data at a very high rate. However, I presume that to send a frame over a slow link, e.g. USB serial, it may be possible to capture one frame to the FIFO and then read that frame multiple times, offsetting by one additional line each time the FIFO is read (and only reading one line).

But I also can’t remember if the FIFO holds a whole frame or just 1 line, but it’s a large buffer (384k)

http://www.averlogic.com/pdf/AL422B_Flyer.pdf

So I presume it’s a whole frame.

// configure PA8 to output PLL/2 clock

#ifndef OV7670_INIT_CLOCK_OUT

#define OV7670_INIT_CLOCK_OUT \

gpio_set_mode(GPIOA, 8, GPIO_AF_OUTPUT_PP); \

*(volatile uint8_t *)(0x40021007) = 0x7

#endif

#ifdef _VARIANT_ARDUINO_STM32_

If the -std=gnu++11 flag was missing, it would not compile.

And perhaps without the -O2 it runs too slow.
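For anyone wondering where the magic address 0x40021007 in the OV7670_INIT_CLOCK_OUT macro comes from, here is a sketch of the arithmetic. The register values are taken from my reading of the STM32F103 reference manual (RM0008) and should be treated as assumptions to check against your own copy; the constant and function names are made up.

```cpp
#include <cstdint>

// STM32F103 values as I read them in RM0008 (assumptions, please verify).
constexpr std::uint32_t kRccBase    = 0x40021000u;  // RCC peripheral base
constexpr std::uint32_t kCfgrOffset = 0x04u;        // RCC_CFGR register offset
constexpr unsigned      kMcoShift   = 24;           // MCO[2:0] = CFGR bits 26:24

// Address of the byte of RCC_CFGR that holds the MCO field
// (the Cortex-M3 is little-endian, so bits 31:24 live at offset +3).
constexpr std::uint32_t mcoByteAddress() {
    return kRccBase + kCfgrOffset + kMcoShift / 8;
}

// The MCO field value as it would appear in the full 32-bit CFGR word.
constexpr std::uint32_t mcoFieldInWord(std::uint8_t mco) {
    return static_cast<std::uint32_t>(mco & 0x7u) << kMcoShift;
}
```

So writing 0x7 to that single byte selects MCO = 0b111, which the manual lists as "PLL clock divided by 2", and PA8 is the MCO pin once it is set to alternate function output.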

BTW.

I see a lot of activity about using DMA to write data to SPI etc, but DMA can also read and write to GPIO.

So it may be possible to use DMA to read the pixels, without needing the code with all the ASM NOPs.

[konczakp – Sat Jun 17, 2017 7:55 am] –

To get this compiled You have to add to compiler.cpp.flags : -std=gnu++11 -O2

Can you remember how you wired it?

I see in the github repo that it says.. the camera connections are

A8 – XCLCK (camera clock)

PB3 – HREF (Connecting this is not mandatory. Code is not using it)

PB4 – PCLCK (pixel clock)

PB5 – VSYNC (vertical sync)

PB6 – i2c Clock (10K resistor to 3.3V)

PB7 – i2c data (10K resistor to 3.3V)

PB8..PB15 – D0..D7 (pixel byte)

(I don’t have that display, so I’ll need to do something else e.g. display to ILI9341 somehow)

Did you follow that wiring exactly ?

Looking at the last time I tried this, I had wired the two I2C connections to 3.3V via 4.7K, but perhaps that is too strong a pullup.

TFT : STM32

GND : GND

VCC : +5v

Reset : PA1

A0 : PA3

SDA : PA7

SCL : PA5

CS : PA2

LED + : 3.3v

LED - : GND

CAMERA : STM32

reset : +3.3v

pwdn : GND

D0 : PB8

D1 : PB9

D2 : PB10

D3 : PB11

D4 : PB12

D5 : PB13

D6 : PB14

D7 : PB15

xclk : PA8

pclk : PB4

href : not connected

vsync : PB5

sido : PB7 -> 10K resistor to 3.3V

sidc : PB6 -> 10K resistor to 3.3V

gnd : gnd

vcc : +3.3v

I didn’t manage to find time to wire it yesterday, as the forum BBS took far longer than anticipated to upgrade.

I will try to wire it to a blue pill today

(+∞ if you make it very simple to use

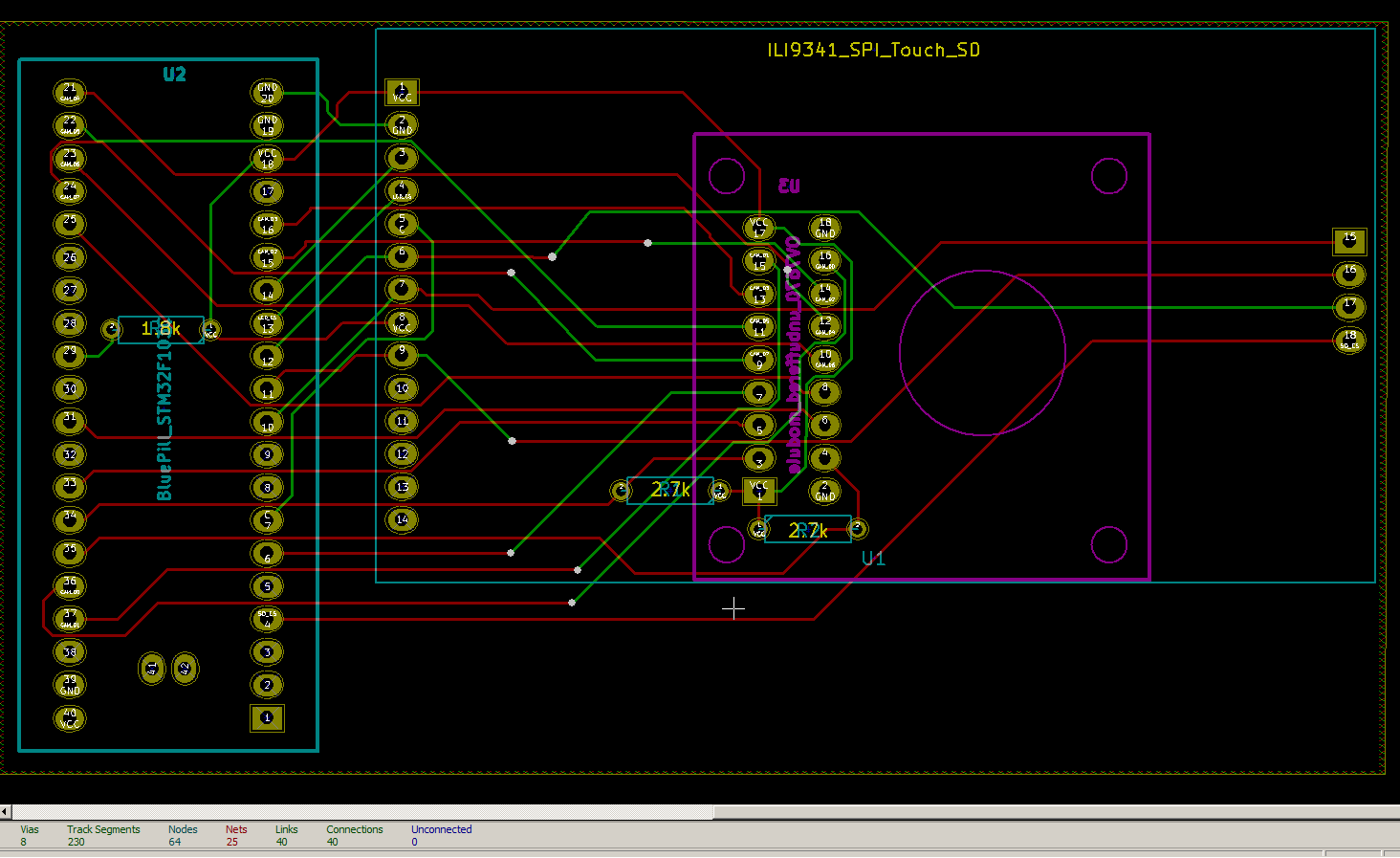

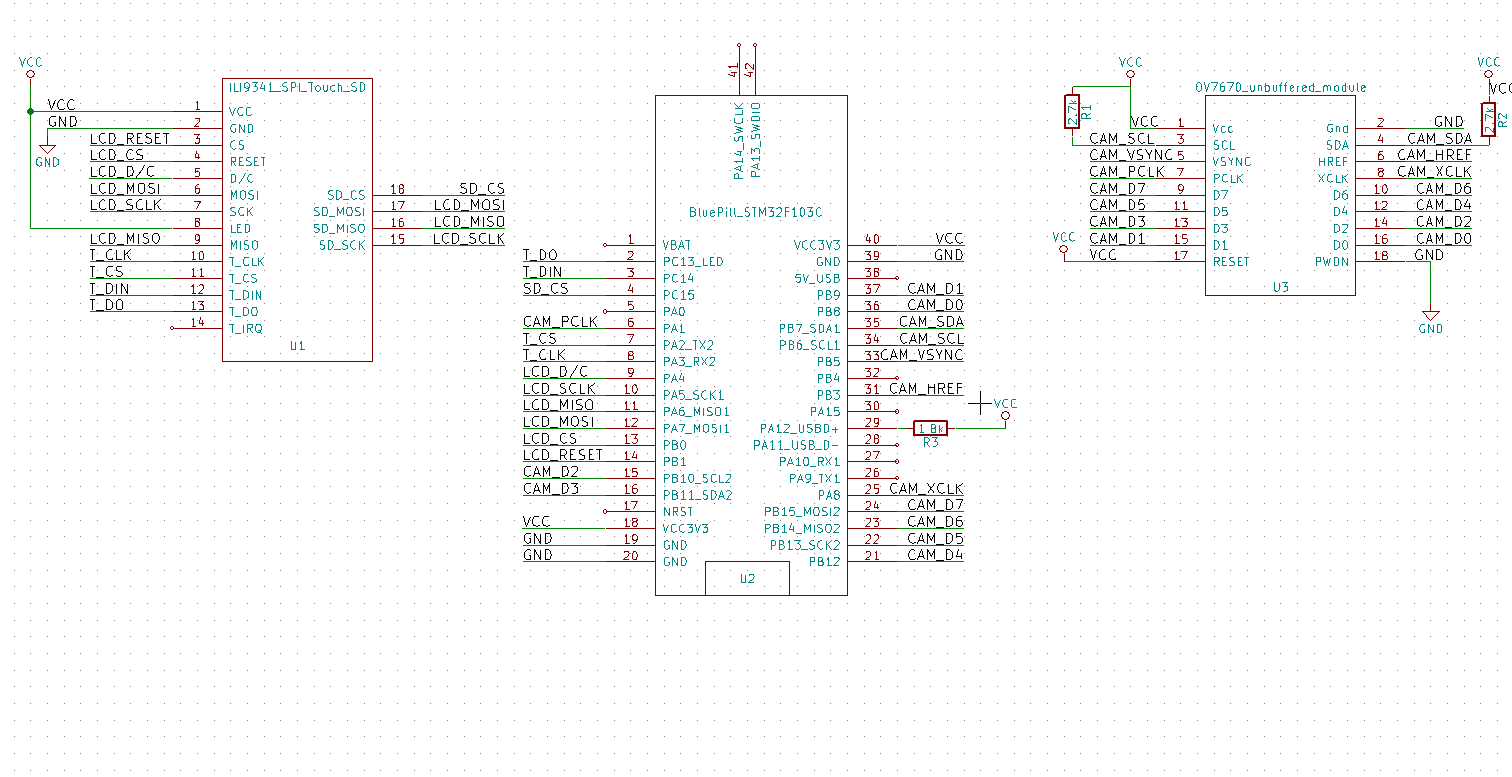

I’m also thinking of generally making a PCB which has connections for a Blue Pill and a touch screen ILI9341 display + SD.

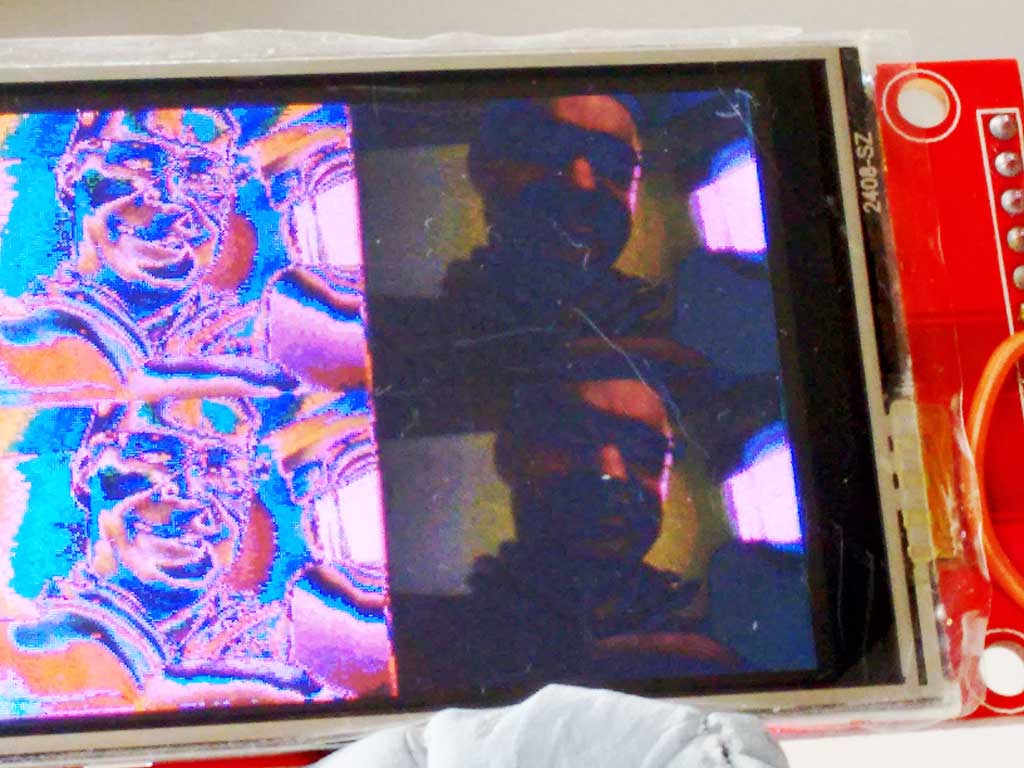

I wired up my unbuffered OV7670 to a Blue Pill (lots of wires) and also connected an ILI9341 (even more wires)

I have compiled the LiveOV7670stm32 sources, without changing the optimisation settings (yet), and changed the code so that I’m using the Adafruit ILI9341 lib instead of the ST7735 library

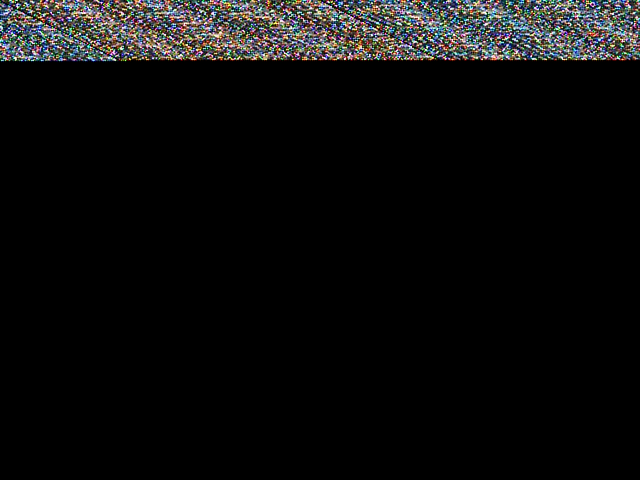

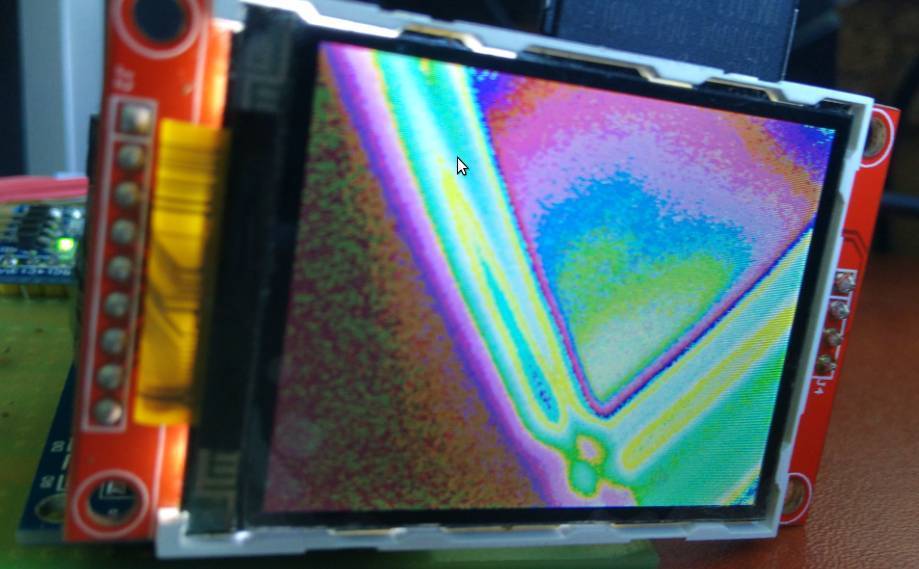

I’ve also started to change the code so that the scan lines are sent to the ILI9341 in a different way to the ST7735, and I’m starting to see what look like images on the display. However, the scan line length does not match the ILI9341, I’m not setting the line position, and I’m only sending the raw 8 bit data from the camera when I think the display expects 16 bits per pixel. Hence the image of 2 lights in my ceiling looks like this:

- ov7670_ili9341.jpg

The display code seems to have NOPs in it, which I find really strange.

And when I try to just use SPI.dmaSend() to put the line buffer from the camera to the LCD, adding code to the function before it calls dmaSend() causes the image to get displayed differently

This is possibly why the compile option -O2 may be needed, but it probably doesn’t help me to get it working with the ILI9341.

As the camera is set to run as QQVGA, and the display is QVGA, I tried changing the camera code to do QVGA 320×240, by duplicating the BufferedCameraOV7670_QQVGA class to make BufferedCameraOV7670_QVGA (and changed the values).

But if I try to send the pixel buffer to the display it seems to crash the code.

Looking at the maths of the data rates: the code is reading in data at 8 Mbytes per sec (from the 8MHz pixel clock, as it’s 8 bits wide).

But it can only be sent to the display at 36MHz (36 Mbit/s, i.e. 4.5 Mbytes per sec), plus the overhead of the SPI setup.

So the only way I can see this working is using different lines from each frame.

i.e. from camera frame 1 use line 1 and send it to the display, then wait for the next camera frame, read only line 2 and send that to the display.

But this results in a really low frame rate.

So perhaps some more complex scheme would work, e.g. read every 4th camera line and send it to the display and then offset by 1 line each frame % 4
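A minimal sketch of that interleaving scheme, just to show which lines would be captured on which frame. `linesForFrame` is a hypothetical helper, not something from the LiveOV7670 code.

```cpp
#include <vector>

// Camera lines to capture on a given frame when only every `stride`-th line
// is read and the starting line shifts by one on each successive frame.
std::vector<int> linesForFrame(int frameIndex, int stride, int height) {
    std::vector<int> lines;
    if (stride <= 0 || height <= 0 || frameIndex < 0) {
        return lines;  // nothing sensible to capture
    }
    for (int line = frameIndex % stride; line < height; line += stride) {
        lines.push_back(line);
    }
    return lines;
}
```

With a stride of 4, every line of the image is refreshed once every 4 camera frames, so the display update rate is a quarter of the camera frame rate rather than one line per frame.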

It was used with retrobsd as swap and ramdisk. The max rd/wr speeds around 10MB/sec. Maybe useful as a frame buffer??

I also have the type of OV7670 which has the FIFO buffer chip on the back. Averlogic AL422B-PBF

http://www.frc.ri.cmu.edu/projects/buzz … Sheets.pdf

As far as I can tell, it should be possible to write one frame from the camera at 8MHz, but then switch the clock down to 1MHz to read the data from the FIFO.

Or if that does not work, then just read the frame out line by line, i.e. 1 line per frame, though this would be a lot slower.

But I hoped to be able to get a full QVGA 320x240 image onto the ILI9341.

However at the moment the colours are all wrong, and I don’t know if the camera is being initialised correctly or not.

The code seems to init the camera and report that it’s OK, but it’s possible that it’s not really initialised completely correctly.

I think the only way I can test this correctly is to order a ST7735 module from eBay

perhaps this module

http://www.ebay.com.au/itm/1-8-inch-1-8 … 2456943464

And then compile and run the code using this display and confirm its all working OK.

And then try to get the ILI9341 display working

PS. Debugging is a pain. The code calls noInterrupts(), so USB stops. Debugging realtime stuff like this is also tricky if it relies on bizarre timings.

I will probably have to debug by sending data to Serial port

I may take a look at using daniel’s STM32Generic and the STM DMA library, to perhaps try DMA’ing the GPIO into RAM rather than using the code full of NOPs

[konczakp – Wed Jun 21, 2017 11:51 am] –

That’s right, they differ but it is easy to change in the code how the screen is being initialized. There is something like init screen with blacktab greentab etc.

Ok.

I ordered two of those displays for testing

BTW. If you compile without the -O2 option, can you tell me if it still does not work when you are using the latest version of the repo?

At the moment I get completely wrong colours, which could be caused by the camera not being set up correctly, but could also be a by-product of the data not being clocked in correctly from the camera because of timing issues.

void initLiveOV7670() {
  bool cameraInitialized = camera.init();
  tft.initR(INITR_BLACKTAB);
  if (cameraInitialized) {
    tft.fillScreen(ST7735_BLACK);
  } else {
    // A red screen for 3 seconds signals that camera init failed
    tft.fillScreen(ST7735_RED);
    delay(3000);
  }
}

I know why I get 4 images on the screen. That’s because the camera is recording at QQVGA and the screen is QVGA.

I tried changing the code to get the camera to record as QVGA (320×240), but when I try to send that data to the ILI9341 it crashes and I’m not sure why.

I’ll try recompiling with the -O2 optimisation.

I’ll also need to look at the code to understand how it’s supposed to work, as the function to write the pixels to the screen is very very slow and I don’t understand why there are NOPs in it.

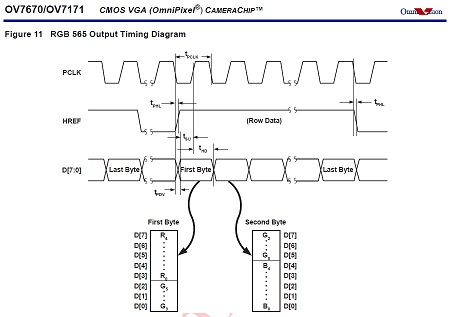

Pixels from the camera are sent in two byte RGB565 format.

first byte:

R - PB15

R - PB14

R - PB13

R - PB12

R - PB11

G - PB10

G - PB09

G - PB08

second byte:

G - PB15

G - PB14

G - PB13

B - PB12

B - PB11

B - PB10

B - PB09

B - PB08
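As a rough sketch of how the two bytes described above map to colour channels (PB15..PB8 being D7..D0 of each byte), something like the following could be used to check pixel values on a PC. The struct and function names are hypothetical.

```cpp
#include <cstdint>

// One RGB565 pixel split into channels: r and b are 0..31, g is 0..63.
struct Rgb {
    std::uint8_t r;
    std::uint8_t g;
    std::uint8_t b;
};

// Unpack a pixel from the two bytes in the order the camera sends them:
// first = RRRRRGGG, second = GGGBBBBB.
Rgb unpackRgb565(std::uint8_t first, std::uint8_t second) {
    Rgb p;
    p.r = static_cast<std::uint8_t>(first >> 3);
    p.g = static_cast<std::uint8_t>(((first & 0x07) << 3) | (second >> 5));
    p.b = static_cast<std::uint8_t>(second & 0x1F);
    return p;
}

// The same pixel as one 16-bit value with the first byte as the high byte.
std::uint16_t toWord(std::uint8_t first, std::uint8_t second) {
    return static_cast<std::uint16_t>((first << 8) | second);
}
```

Note that green straddles the two bytes, so if the bytes of a pixel are swapped or paired with the wrong neighbour, all three channels come out wrong, not just one.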

https://cdn-shop.adafruit.com/datasheets/ILI9341.pdf

I think the data format is on page 45

I thought it was 565 like the camera, but I’ll need to double check.

If you store it as an uint16_t, and write it to the display in little endian, then the colors will be of course different.

[stevestrong – Thu Jun 22, 2017 11:45 am] –

It seems to be 565, but read out in big endian format (high byte first).

If you store it as an uint16_t, and write it to the display in little endian, then the colors will be of course different.

I actually tried building a buffer and byte swapping, but it seemed to make things worse.

The ILI9341 also seems to have an ENDIAN selection, (page 192 of the doc)

So it may be possible to swap it so that it’s the same as the camera.

And if that doesn’t fix the colours, I will wait for the 7735 display to arrive, because it will be a lot easier to get it working with the ILI9341 when I know for sure the camera is outputting RGB.

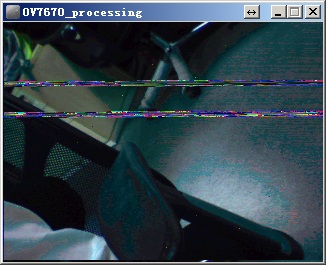

Also, if I byteswap I do get better colours, but it looks like there is something strange going on with either the image capture or sending the data to the display.

Because the byte order seems to be swapping alternately between lines

- ov7670_byteswap.jpg

I changed my code so that I byte swap and duplicate the pixels along the line, and also send the same line to the display twice.

uint8_t dispBuf[320*2];                 // one QVGA display line, 2 bytes per pixel
uint8_t b1;
uint8_t *tdBufEnd = dispBuf + 320*2;

void sendLineToDisplay()
{
  // if (screenLineIndex-- > 0)
  {
    uint8_t *cBufPtr = (uint8_t *)camera.getPixelBuffer();
    uint8_t *tdBuf = dispBuf;
    while(tdBuf < tdBufEnd)
    {
      b1 = *cBufPtr++;        // first byte of the camera pixel
      *tdBuf++ = *cBufPtr;    // second byte goes out first (byte swap)
      *tdBuf++ = b1;
      *tdBuf++ = *cBufPtr++;  // same pixel again (horizontal doubling)
      *tdBuf++ = b1;
    }
    // Send the same line twice (vertical doubling)
    SPI.dmaSendAsync((uint8_t *)dispBuf,camera.getPixelBufferLength());
    SPI.dmaSendAsync((uint8_t *)dispBuf,camera.getPixelBufferLength());
  }
}
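The swap and duplicate step can be checked on a PC without the camera or display. This is a host-side sketch of the same loop, written with vectors instead of raw pointers; `swapAndDouble` is a made-up name and the SPI part is left out.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Expand one camera line of 2-byte pixels: swap the two bytes of each pixel
// and emit every pixel twice (horizontal doubling, QQVGA -> QVGA width).
std::vector<std::uint8_t> swapAndDouble(const std::vector<std::uint8_t>& line) {
    std::vector<std::uint8_t> out;
    out.reserve(line.size() * 2);
    for (std::size_t i = 0; i + 1 < line.size(); i += 2) {
        const std::uint8_t first = line[i];
        const std::uint8_t second = line[i + 1];
        for (int copy = 0; copy < 2; ++copy) {
            out.push_back(second);  // swapped order: second byte first
            out.push_back(first);
        }
    }
    return out;
}
```

A 320 byte QQVGA line comes out as 640 bytes, which is one full QVGA display line at 16 bits per pixel.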

[konczakp – Sat Jun 24, 2017 6:53 am] –

I think you could solve the artifacts problem (violet or other strange colors) by setting all data pins to input mode in setup() in the main file. It seems that one data pin is not functioning as it should. Try it.

OK

I could have a dry joint or a bad connection.

I’ll write some test code to read the PB IDR and see if one of the bits is always zero
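A minimal sketch of that kind of check, assuming the data bytes have already been captured into a buffer (the helper names are made up). OR-ing all samples together shows which bits were ever high; any bit that never was is a candidate for a stuck or unconnected pin.

```cpp
#include <cstdint>
#include <vector>

// Bits that were 0 in every sample (candidates for a stuck-low input).
// Returns 0xFF for an empty sample set, since no bit was ever seen high.
std::uint8_t stuckLowBits(const std::vector<std::uint8_t>& samples) {
    std::uint8_t seenHigh = 0;
    for (std::uint8_t s : samples) {
        seenHigh = static_cast<std::uint8_t>(seenHigh | s);
    }
    return static_cast<std::uint8_t>(~seenHigh);
}

// Bits that were 1 in every sample (candidates for a stuck-high input).
std::uint8_t stuckHighBits(const std::vector<std::uint8_t>& samples) {
    std::uint8_t seenLow = 0;
    for (std::uint8_t s : samples) {
        seenLow = static_cast<std::uint8_t>(seenLow | static_cast<std::uint8_t>(~s));
    }
    return static_cast<std::uint8_t>(~seenLow);
}
```

This only works with a reasonably varied image; a few hundred bytes from a normal scene should be enough for every working bit to toggle at least once.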

[stevestrong – Sat Jun 24, 2017 7:07 am] –

Having the need to change the byte swap would mean that you are actually losing a byte (half a pixel) from one line to the next, which you recover somehow at the next line.

I should not be losing half a pixel.

b1=*cBufPtr++;

*tdBuf++=*cBufPtr++;

*tdBuf++=b1;

However, I think @konczakp may be correct, and that perhaps 1 data bit is not connected.

I commented out the line that disabled the interrupts, and used Serial.print to log some data from the camera, and I think bit 3 (counting from 1, i.e. mask 0x04) is always low.

e.g.

some data

FA

FB

FA

FB

FB

FB

FB

FB

FB

FB

FB

EB

F9

91

32

D3

52

BA

8B

99

48

91

3

78

83

70

22

78

23

70

3

6B

E2

6B

C1

6B

82

63

82

6B

42

63

42

5B

22

63

22

63

42

6B

63

73

62

73

62

6B

82

73

A2

73

E2

7B

E2

7B

C1

6B

60

6B

41

6B

61

80

29

80

49

78

8

80

28

7B

E9

63

62

6B

29

7B

AA

7B

C9

7B

EA

83

EA

7B

A9

52

C1

42

23

0

C2

10

A2

10

82

10

82

10

82

10

82

10

82

10

82

10

82

8

82

8

82

10

82

10

A2

10

A3

10

A3

10

83

10

83

8

62

8

62

8

82

8

82

10

82

10

82

8

83

8

83

8

82

8

82

8

82

8

82

8

83

8

83

8

82

8

82

8

82

8

82

8

82

8

82

8

82

8

62

8

62

8

62

8

62

8

62

8

62

8

62

8

62

10

C3

11

1

10

E0

8

C0

0

FA

FB

FA

FB

FB

FB

FB

FB

FB

FB

FB

D2

F9

A9

B0

99

CB

99

AA

91

4A

91

4A

89

4A

89

4A

89

9

89

9

88

E9

88

E9

80

C9

80

C9

80

A9

80

A9

88

A9

80

A9

80

A8

80

A8

80

89

88

89

88

89

80

88

80

68

80

43

80

48

80

28

80

43

80

48

88

A9

90

C9

78

8

80

28

78

A

6B

63

63

3

7B

A9

73

C9

7B

CA

7B

C9

73

A9

52

C1

42

23

0

8

82

8

82

8

62

8

62

8

62

8

62

8

62

8

62

8

62

8

62

8

62

8


Anyway

PB10 does not seem to default to input (not sure why)

So I was losing 1 bit of input data, as it was always zero.

I added some extra (missing) init code

const int inputPins[]={PB8,PB9,PB10,PB11,PB12,PB13,PB14,PB15};

for(int i=0;i<8;i++)
{
  pinMode(inputPins[i],INPUT);
}

Still a bit washed out, but it’s working a lot better.

It may be even better in daylight, so I’ll make another video tomorrow.

@konczakp thanks again

I should probably do a PR to the author’s GitHub account, or at least raise an issue with this fix!

[Pito – Sat Jun 24, 2017 7:19 pm] –

PB10 on BP issue – http://www.stm32duino.com/viewtopic.php … =30#p30136

Thanks

I vaguely recalled reading that.

I’m sure it can be fixed with some #ifdef statements

[RogerClark – Sat Jun 24, 2017 11:04 pm] –[Pito – Sat Jun 24, 2017 7:19 pm] –

PB10 on BP issue – http://www.stm32duino.com/viewtopic.php … =30#p30136

Looks like the best solution is to define

#define BOARD_USB_DISC_DEV NULL

#define BOARD_USB_DISC_BIT NULL

[RogerClark – Sun Jun 25, 2017 3:03 am] –

I’ve pushed a commit to fix this for the BP and a few other boards; however, some boards seem to have other pins defined as the Disconnect pin (not just the Maple Mini), so I’ve left those untouched in case people are using boards with disconnect hardware.

Would this affect in any way the USB re-enumeration after reset on non-MM boards?

As far as I can see, re-enumeration is exactly what this pin is supposed to do, and if you set it to NULL it will not be done anymore.

But I am most probably wrong.

So for boards without extra USB hardware, this pin should not be set to OUTPUT during the USB enable function.

But I think perhaps on boards like the BP, some other code may be needed in USB enable(); the code was put into USB serial by mistake, because at the time the only type of USB interface supported was USB serial.

Please explain to me what is going on there, I simply don’t understand it.

First of all, does the CPU use SYSCLK?

Does the CPU run at 72MHz?

If i check the RM0008 figure 8 Clock tree, I see the source for SYSCLK can be HSI, PLLCLK or HSE.

HSI = 8MHz.

HSE = 8MHz (on BP).

PLLCLK = ???

Is the source of SYSCLK set to PLLCLK?

Does this mean that PLLCLK is also 72MHz?

OTOH, MCO is outputting PLLCLK/2 which is in any case half the CPU clock, right?

Does this mean, MCO is outputting 36MHz? Pixel clock = 36MHz?

Anyway, if the CPU clock is only twice the pixel clock, then I don’t understand all those NOPs. They most probably provide precise timing for sampling the camera data, but it seems that the CPU should sample a byte at every second clock, and not wait with several NOPs.

On the other side, if MCO = 8MHz, this means SYSCLK = 16MHz, SPI clock = 8MHz…

Where am I wrong?

EDIT

I think I got it.

I mixed up XCLK with PCLK.

So it seems that XCLK = 36MHz (MCO), but PCLK = 8MHz (or possibly 9MHz), right?

Did you measure it with the scope?

Anyway, we need those NOPs for an optimum reading of the pixel data at the middle of the PCLK high period.

But if there is sooooo much time to wait, why not read the data and output it to the display right away?

Re: Reading and writing at the same time.

It would be more efficient to byte swap as it reads the pixels, and also to pixel duplicate, and perhaps even to duplicate the line into a buffer.

This would leave more time for doing other processing, but on an F103 or most STM32s it’s not going to be possible to do much processing apart from perhaps colour correction, because normally to do things like sharpening etc. you need a video frame buffer so that adjacent pixels in X and Y directions can be accessed.

I don’t know what applications there are for just streaming the video to the display, apart from using that as a preview before capturing one frame as a still image.

I suppose it could be used for motion detection by some sort of checksum for each frame (it would be a really big number), possibly beyond 32 bits.
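A very rough sketch of the checksum idea, assuming a plain sum of the pixel bytes is used as the "checksum"; the function names and the threshold are invented for illustration. A plain sum of a QVGA RGB565 frame is at most 320 × 240 × 2 × 255 = 39,168,000, so it would actually fit in 32 bits, but a 64 bit accumulator leaves headroom for other schemes.

```cpp
#include <cstdint>
#include <vector>

// Sum of all bytes in a chunk of pixel data. Sums of individual lines can
// simply be added together, so no full frame buffer is needed.
std::uint64_t frameSum(const std::vector<std::uint8_t>& pixels) {
    std::uint64_t sum = 0;
    for (std::uint8_t p : pixels) {
        sum += p;
    }
    return sum;
}

// True if two frame sums differ by more than `threshold`.
bool frameChanged(std::uint64_t previous, std::uint64_t current,
                  std::uint64_t threshold) {
    const std::uint64_t diff =
        previous > current ? previous - current : current - previous;
    return diff > threshold;
}
```

A sum like this only reacts to overall brightness changes, not to something moving within the scene, so summing per line or per block would probably be needed for anything useful.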

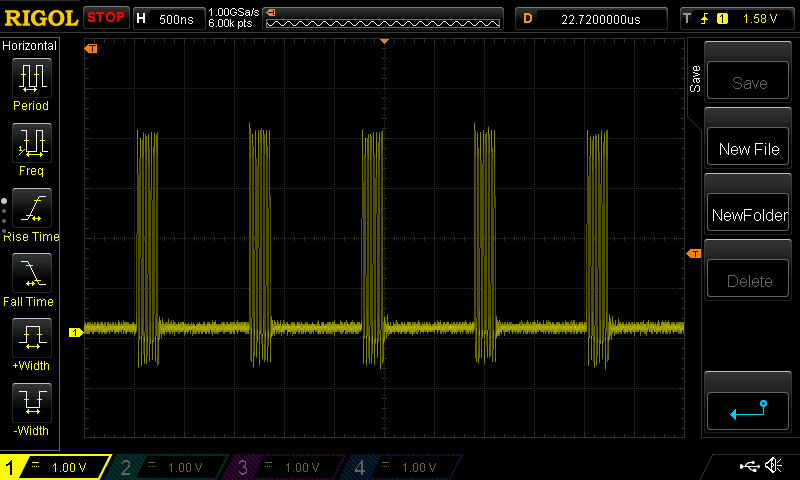

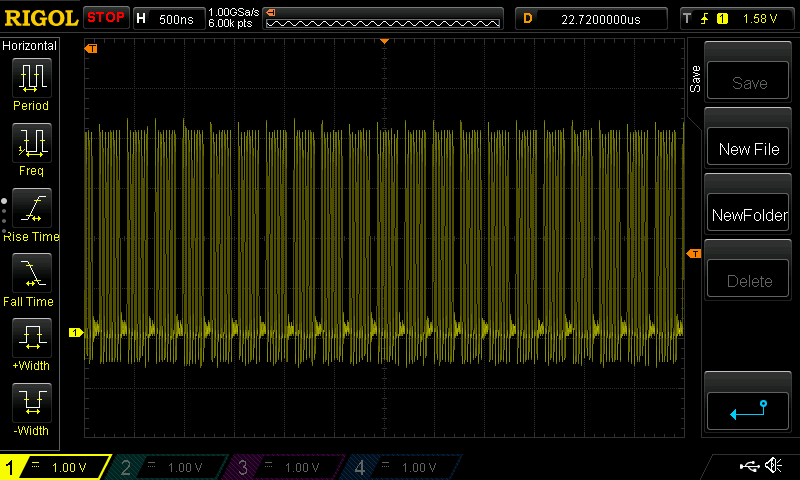

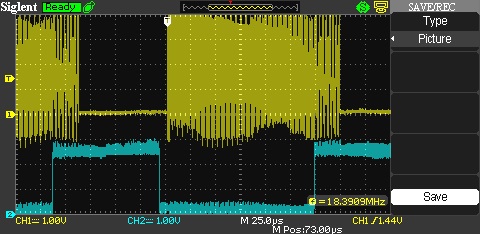

I checked, and XCLK is showing as 33MHz on my analyser, but it must really be 36MHz (72MHz / 2).

Pixel clock seems to be 2.25MHz, i.e. DIV16 of 36MHz, @ 12 FPS.

If I change the framerate to 7.2 FPS, the pixel clock changes to 1.51MHz (I presume this is 36MHz DIV24).
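The clock chain behind those numbers can be written down as a small sketch. The PLL multiplier of 9 is an assumption (the usual way to get 72MHz from the Blue Pill's 8MHz crystal), and the names are made up.

```cpp
#include <cstdint>

constexpr std::uint32_t kHseHz  = 8000000;  // Blue Pill crystal
constexpr std::uint32_t kPllMul = 9;        // assumption: 8MHz x 9 = 72MHz

// PLL output, used as SYSCLK.
constexpr std::uint32_t pllHz() { return kHseHz * kPllMul; }

// XCLK fed to the camera: MCO outputs PLL / 2.
constexpr std::uint32_t xclkHz() { return pllHz() / 2; }

// Pixel clock for a given overall divider inside the camera.
constexpr std::uint32_t pclkHz(std::uint32_t divider) {
    return xclkHz() / divider;
}
```

`pclkHz(16)` gives 2,250,000 and `pclkHz(24)` gives 1,500,000, which matches the measured 2.25MHz and (within analyser resolution) 1.51MHz.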

Back on 12 FPS QQVGA

There is a huge amount of time, about 3.5ms, between the VSYNC and the start of the first line.

The pixel clock goes low at the same time as HREF goes high, and I think the first pixel of data gets clocked on the next rising edge of the pixel clock.

Each line at QQVGA lasts about 143us, and there is then a big gap of about 550us before the next line.
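As a sanity check on those timings, here is a sketch of the per-line time budget. It assumes one byte per PCLK cycle, 2 bytes per pixel, and an SPI transfer with no gaps or setup overhead; the function names are hypothetical.

```cpp
#include <cstdint>

// Time in nanoseconds for the camera to clock out one line,
// one byte per pixel clock cycle.
constexpr std::uint64_t lineTimeNs(std::uint32_t bytesPerLine,
                                   std::uint32_t pclkHz) {
    return static_cast<std::uint64_t>(bytesPerLine) * 1000000000ull / pclkHz;
}

// Time in nanoseconds to send `bytes` over SPI at `spiHz` bits per second,
// ignoring any setup overhead or gaps between bytes.
constexpr std::uint64_t spiTimeNs(std::uint32_t bytes, std::uint32_t spiHz) {
    return static_cast<std::uint64_t>(bytes) * 8ull * 1000000000ull / spiHz;
}
```

A QQVGA line is 160 × 2 = 320 bytes, so `lineTimeNs(320, 2250000)` is about 142us, which agrees with the measured 143us. Sending a pixel-doubled line twice (1280 bytes) at 36MHz takes `spiTimeNs(1280, 36000000)`, about 284us, so in principle it fits in the 550us gap.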

What would be great is if we could use the DMA to read GPIO port B on the rising edge of the pixel clock.

On the Blue Pill the pixel data is read in via PB8 to PB15 (upper 8 bits). The lower 8 bits would be better, but this would need a connection to Boot1 (PB2).

But can we trigger DMA on a (rising/falling) edge of an input signal (PCLK)?

I think we could count the input signal edges with a timer set up in slave trigger mode, whose output can then work as the DMA trigger source, as I mentioned already here: http://www.stm32duino.com/viewtopic.php?f=19&t=4#p11307

[stevestrong – Mon Jun 26, 2017 9:07 am] –

But can we trigger DMA on a (rising/falling) edge of an input signal (PCLK)?

I think we could count the input signal edges with a timer set up in slave trigger mode, whose output can then work as the DMA trigger source, as I mentioned already here: http://www.stm32duino.com/viewtopic.php?f=19&t=4#p11307

Yes. I thought it was possible to basically trigger a single DMA transfer on EXTI via a Timer, but it sounds difficult to set up.

I will test the software when those modules arrive.

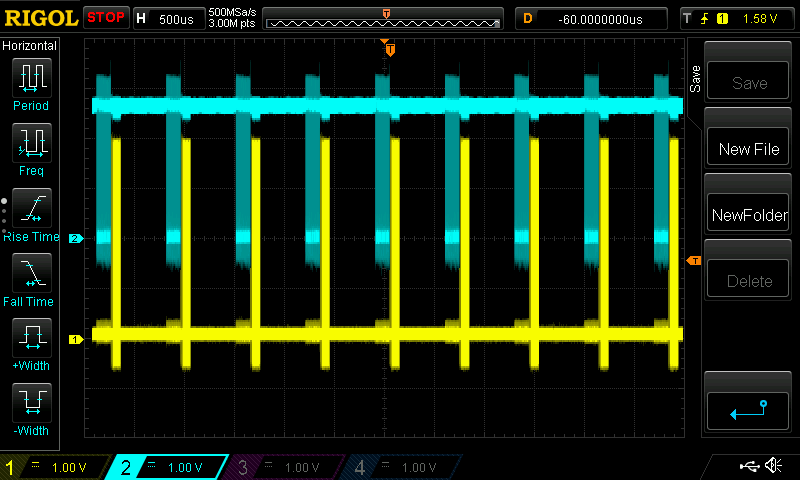

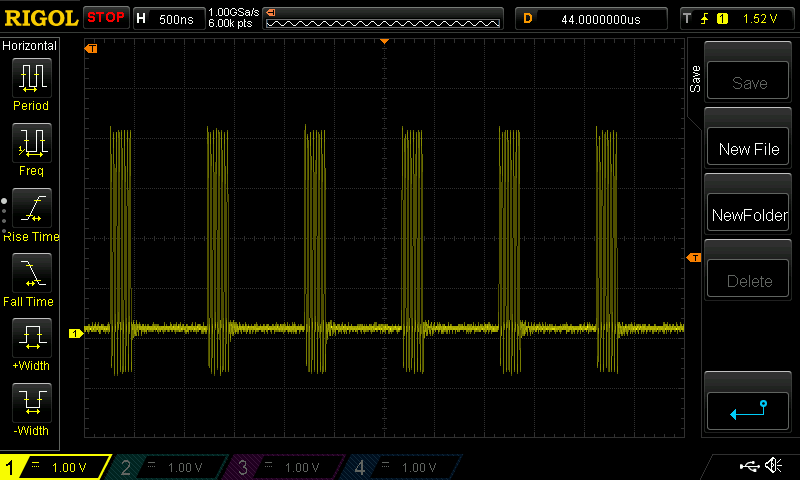

There was a question earlier of why I have “asm volatile(“nop”);” in the method that sends pixels to the screen.

The reason is that SPI.transfer(byte); is terribly slow and it just wastes time:

But when I just set the SPI TX register and wait a little before setting the next byte, it looks like this:

There was also some talk about gaps between lines. Here is what scan lines look like with QQVGA@12Hz:

Blue is pixel clock from camera.

Yellow is SPI clock to the screen.

In the Arduino UNO code I was able to get the 10fps because it was in perfect sync with the camera. I could detect the first falling edge of the pixel clock and then read rest of the line blindly without checking the pixel clock. For some reason I was not able to do that with STM32. I believe that QQVGA@30Hz should be possible with STM32 if I could get pixel reading perfectly synchronized.

Thanks for the info.

Indeed, calling SPI transfer for each byte separately is not a good idea.

But we have efficient SPI multi-byte write routines, with and without DMA.

So you could use SPI.write(buffer, nr_bytes); instead of calling SPI.transfer many times consecutively.

According to your scope plot, I assume that, keeping the current SW structure, it would be possible to double the fps without changing anything other than the PCLK prescaler of the camera. The time period between the SPI write train and the next PCLK train is large enough.

Thanks for posting.

Perhaps I should have put a link to this thread in my reply to your youtube post.

I am using the ILI9341 display, which is QVGA, and the byte order of its data is reversed from what the camera sends.

I do a bit of a hack, by simply transferring the entire pixel buffer from the camera to the display, via a temporary buffer, using the dmaSend() function.

To get from QQVGA to QVGA I byte swap and duplicate pixels from the pixel buffer into my temp buffer, then send the same line twice.

I tried switching to QVGA from the camera, but at 12FPS I can’t send to the display fast enough, because the SPI max speed is 36M bits per second and the camera data rate at QVGA is higher.

But I think 7.2 FPS QVGA is technically possible.

@pito also suggested changing the main PLL to give 80MHz; then SPI to the ILI9341 would be 40MHz, but even with this, I am not sure QVGA at 12 FPS would be possible.

I may move the data input to PB0 to PB7 ( this needs a connection to the Boot1 header but this is OK)

Then it should be possible to clock the data from the camera via DMA.

https://cdn-shop.adafruit.com/datasheets/ILI9341.pdf

[Pito – Tue Jun 27, 2017 5:51 am] –

Btw the Ili9341 datasheet says the “write” SPI clock shall be 10MHz max..

https://cdn-shop.adafruit.com/datasheets/ILI9341.pdf

LOL

We already run it at 36MHz.

I think for playing with video, and your intention to do some image processing, the Black F407ZET plus 512-1024kB external SRAM is a minimum. The F407 even has DCMI.

[Pito – Tue Jun 27, 2017 6:26 am] – F407 even possess the DCMI..

That sounds like an easy job.

But where is the challenge then?

[stevestrong – Mon Jun 26, 2017 7:52 pm] –

@indrekluuk,

Thanks for the info.

Indeed, calling spi transfer for each byte in part is not a good idea.

But we have efficient spi multi-byte write routines, with and without dma.

So you could use SPI.write(buffer, nr_bytes); instead of calling SPI.transfer many times consecutively.

I tested. SPI.write(const uint8 *data, uint32 length) is only marginally better than calling SPI.transfer one by one.

In this case you could use:

SPI.write(uint8 data);

void sendLineToDisplay() {
  if (screenLineIndex > 0) {
    screenLineStart();
    SPI.write(camera.getPixelBuffer(), camera.getPixelBufferLength());
    screenLineEnd();
  }
}

I don’t have a QVGA screen, but I was able to display a quarter of the image clearly on my smaller screen.

I use dmaSend() a whole line at a time

Edit.

I have QVGA

I will download later

Sorry, it seems that this is bit reversal and I already have a method for that.

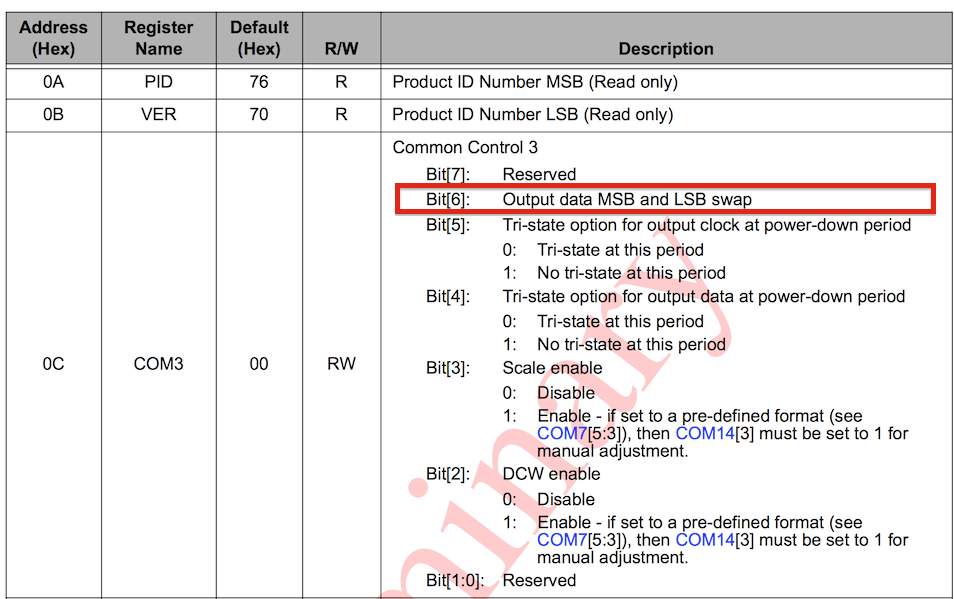

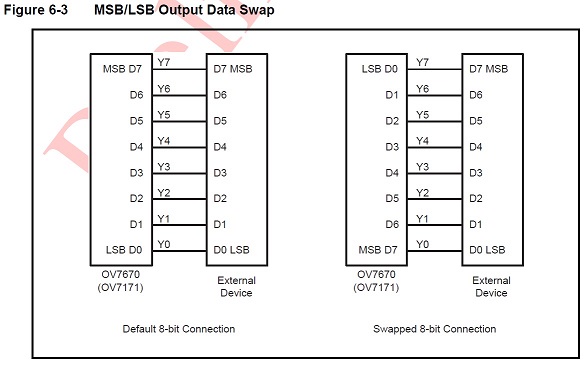

RogerClark, I just checked OV7670 datasheet (https://www.voti.nl/docs/OV7670.pdf)

OV7670 has a register bit that swaps pixel bytes:

You don’t have to write any hacks to swap byte order. I will add a method to the CameraOV7670 class that sets this register bit when I get back home in the evening.

I did look in the spec for the camera, but it described the bytes as “odd” and “Even” and I could not find any other functions which said they changed this or the byte order.

This will save a lot of processing, and definitely help with data rate.

But I think 7.2FPS at QVGA is as fast as technically possible, because the data rate to the ILI9341 display, even when overclocked to 36MHz (36Mbps), is slower than QVGA from the camera at 12 FPS.

- OV7670 data swap.jpg

Can you please tell us which SPI driver you are using? Is it from the official Arduino_STM32 repository?

https://github.com/rogerclarkmelbourne/ … es/SPI/src

https://github.com/rogerclarkmelbourne/ … /spi.c#L95

Because this should perform much better than what you have measured.

If I use the “SPI.write(buf, nr_bytes)” function, the SPI clock is almost continuous.

I am using platformIO for compiling and uploading.

At quick glance it seems that the SPI library that comes with platformIO is this:

https://github.com/rogerclarkmelbourne/ … es/SPI/src

[indrekluuk – Wed Jun 28, 2017 8:23 pm] –

@stevestrong I am using platformIO for compiling and uploading.

At quick glance it seems that the SPI library that comes with platformIO is this:

https://github.com/rogerclarkmelbourne/ … es/SPI/src

I am using the ILI9341, and the benefit of this, appears to be that I can dma whole lines via SPI.

At the moment I have to byte swap after the pixel buffer is complete, but it would be easy to change the code that captures the pixels from the camera, so that it performs the byte swap as the data is put into the buffer.

(I may need to change the number of NOPs if this slows down the code a bit, but there are plenty of NOPs, so there would be some spare to do some pointer arithmetic, and it’s possible that the ARM instruction set has methods to do *(ptr + 1).)

I don’t know, would it help if you set the SPI to 16-bit transfer mode? Would the bytes change their order this way?

[stevestrong – Wed Jun 28, 2017 10:08 pm] –

As far as I know, @indrekluuk uses the trick that ignores the very first byte so that the high byte of the next pixel will be the high byte of the actual pixel.

I don’t know, would it help if you set the SPI to 16-bit transfer mode? Would the bytes change their order this way?

I thought about that trick, but I don’t think it works, does it?

Don’t you end up with the high byte of pixel 1 and the low byte of pixel 2 being seen as the first pixel sent to the display?

If this is what I think you’re saying, then there is a big problem, because the green colour channel is split across the bottom of one byte and the top of the next byte. The green channel of the resulting pixel would be a mix of two pixels and could be totally wrong if the value changes very much from pixel to pixel, e.g. if it crosses the threshold where the two halves of the green channel are split across the bytes.
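To make the green-channel concern concrete, here is a minimal host-side sketch in plain C++. It is not taken from LiveOV7670; the helper names (packRgb565, highByte565, lowByte565, green6) are made up for illustration. It packs two neighbouring pixels whose green values are 31 and 32, then checks what green value a display would reconstruct if the high byte of the first pixel were paired with the low byte of the second.

```cpp
#include <cassert>
#include <cstdint>

// RGB565 layout: high byte = RRRRRGGG, low byte = GGGBBBBB.
constexpr uint16_t packRgb565(uint8_t r5, uint8_t g6, uint8_t b5) {
    return static_cast<uint16_t>(((r5 & 0x1F) << 11) | ((g6 & 0x3F) << 5) | (b5 & 0x1F));
}
constexpr uint8_t highByte565(uint16_t p) { return static_cast<uint8_t>(p >> 8); }
constexpr uint8_t lowByte565(uint16_t p) { return static_cast<uint8_t>(p & 0xFF); }

// 6-bit green value a display would reconstruct from a (high, low) byte pair.
constexpr uint8_t green6(uint8_t high, uint8_t low) {
    return static_cast<uint8_t>(((high & 0x07) << 3) | (low >> 5));
}

// Two neighbouring pixels whose green values straddle the byte boundary.
inline void demoGreenSplit() {
    const uint16_t p1 = packRgb565(0, 31, 0);  // green = 0b011111
    const uint16_t p2 = packRgb565(0, 32, 0);  // green = 0b100000
    assert(green6(highByte565(p1), lowByte565(p1)) == 31);  // correct pairing
    assert(green6(highByte565(p1), lowByte565(p2)) == 24);  // shifted pairing
}
```

With correct pairing the green value is 31; with the shifted pairing it becomes 24, even though the two source pixels differ by only one step.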

[RogerClark – Wed Jun 28, 2017 11:32 pm] –

Don’t you end up with the high byte of pixel1 and the low byte of pixel 2, being seen as the first byte sent to the display.

Exactly, this is the trick, if I interpret correctly the stuff here: https://github.com/indrekluuk/LiveOV767 … 7670.h#L17

I don’t think the issue with the green color is critical, since the upper 3 bits are from the correct pixel; the lower 3 bits do not have a big influence on the color when combined with the red and blue channels.

The only thing is the last half pixel, which is neglected, I think. But it is on the margin so is not disturbing if it does not have the right color.

[stevestrong – Wed Jun 28, 2017 10:08 pm] –

As far as i know, @indrekluuk uses the trick that ignores the very first byte so that the high byte of the next pixel will be the high byte of the actual pixel.

You are correct. Currently I “fix” the missing first byte by adding a 0 byte at the beginning. This means that the very first pixel is broken.

This is because there isn’t enough time to detect the pixel clock edge and read the pixel at higher FPS. Maybe if you could do the pixel read at the hardware level (I assume this is what DMA does?) then the problem would be fixed.

Now I saw that the spec has “Dummy pixel insert MSB” and “Dummy pixel insert LSB”. It is probably possible to fix the first byte issue by adding a dummy byte in the beginning that will be ignored.

Aren’t there loads of nops in the code ?

[stevestrong – Thu Jun 29, 2017 6:33 am] –

[RogerClark – Wed Jun 28, 2017 11:32 pm] –

Don’t you end up with the high byte of pixel1 and the low byte of pixel 2, being seen as the first byte sent to the display.

Exactly, this is the trick, if I interpret correctly the stuff here: https://github.com/indrekluuk/LiveOV767 … 7670.h#L17

The issue with green color I don think is critical since the upper 3 bits are from the correct pixel, the lower 3 bits do not have big influence on the color when combined with red and blue channel.

The only thing is the last half pixel, which is neglected, I think. But it is on the margin so is not disturbing if it does not have the right color.

Just to clarify: currently in the code the pixels are paired correctly. Padding is added to the buffer since I miss the first pixel byte when reading from the camera. This means I am not pairing the high byte of pixel 1 with the low byte of pixel 2.

If the pairing of the pixel bytes is shifted then the image looks a little fuzzy.

I think @stevestrong was implying that you swap endianness that way, with the resultant problem in the green channel.

[RogerClark – Thu Jun 29, 2017 6:45 am] –

There is plenty of time to byte swap correctly.

Aren’t there loads of nops in the code ?

I have to think about it a little (perhaps at the weekend).

I think it is possible to get a free byte swap if the memory structure is defined correctly. Since pixelBuffer is in static memory, the +1/-1 calculations with the buffer address will be done at compile time.

Something like this:

while (bufferIndex < (getPixelBufferLength() / 2)) {

waitForPixelClockLow();

asm volatile("nop");

pixelBuffer.writeBuffer[bufferIndex].highByte = readPixelByte(); // same pixel: advance the index only after the low byte

waitForPixelClockLow();

asm volatile("nop");

pixelBuffer.writeBuffer[bufferIndex++].lowByte = readPixelByte();

}

After consulting both OV7670 and ILI9341 manuals I see that there is no need to swap the bytes.

OV7670 data output begins with the r+(g/2) byte:

- Attachment: OV7670 data output.jpg

If you are using “camera.getPixelByte(i)” or “camera.getPixelBuffer()” then it should be in the correct order.

Here is how I understand the pixel data to be. It seems that the spec is not 100% correct on that.

1. For VGA resolution (without any downsampling) the byte order from the camera is

LOW_0, HIGH_0, LOW_1, HIGH_1, … LOW_639, HIGH_639

I had to swap bytes to get correct colors

2. For downsampled resolutions (QVGA and QQVGA) the byte order is switched around and the first and last pixels are broken.

(HIGH_0 is missing), LOW_0, HIGH_1, LOW_1, … HIGH_159, LOW_159, HIGH_160 (<- extra half pixel)

To get correct colors I have to add an extra byte either at the beginning (dropping the last half pixel) or at the end (dropping the first half pixel).

If someone can prove me wrong I am happy to test it myself again.
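The first of those two options (pad at the beginning, drop the trailing half pixel) can be sketched on the host like this. This is only an illustration of the byte shuffling described above, not the LiveOV7670 implementation; realignLine is a hypothetical name and the function works on a plain std::vector rather than the camera's line buffer.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// raw:    bytes as received for one downsampled line, i.e.
//         LOW_0, HIGH_1, LOW_1, ..., LOW_(n-1), HIGH_n  (HIGH_0 is missing)
// pixels: number of pixels wanted in the output line (n)
// Returns n*2 bytes: a zero placeholder for HIGH_0, then the received bytes
// with the trailing half pixel (HIGH_n) dropped.
std::vector<uint8_t> realignLine(const std::vector<uint8_t>& raw, std::size_t pixels) {
    assert(pixels > 0 && raw.size() >= pixels * 2 - 1);
    std::vector<uint8_t> line;
    line.reserve(pixels * 2);
    line.push_back(0);  // placeholder for the missing HIGH_0
    line.insert(line.end(), raw.begin(),
                raw.begin() + static_cast<std::ptrdiff_t>(pixels * 2 - 1));
    return line;
}

// Two-pixel example: LOW_0, HIGH_1, LOW_1, HIGH_2 (extra half pixel).
inline void demoRealign() {
    const std::vector<uint8_t> raw = {0xA0, 0x11, 0xA1, 0x12};
    const std::vector<uint8_t> expected = {0x00, 0xA0, 0x11, 0xA1};
    assert(realignLine(raw, 2) == expected);
}
```

The first pixel still has a wrong high byte (the placeholder), which matches the "very first pixel is broken" behaviour mentioned earlier in the thread.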

[indrekluuk – Thu Jun 29, 2017 10:53 am] –

@RogerClark, do you have your code somewhere in Github? If it is as @stevestrong says that ILI9341 has the same byte order then the question is why do you need swapping.

If you are using “camera.getPixelByte(i)” or “camera.getPixelBuffer()” then it should be in the correct order.

My code is a hack, but I will zip and email it to you (as I am admin, I can look up the email address you registered with).

PS. I don’t allow attachments in PMs as it eats too much disk space, and there are other problems with that.

The camera was running at QQVGA (I didn’t modify anything).

In main.cpp

Remove the stuff about the 7735 display and add the code for the ILI9341 display

#include "SPI.h"

#include <Adafruit_GFX_AS.h> // Core graphics library, with extra fonts.

#include <Adafruit_ILI9341_STM.h> // STM32 DMA Hardware-specific library

#define TFT_CS PA2

#define TFT_DC PA0

#define TFT_RST PA1

Adafruit_ILI9341_STM tft = Adafruit_ILI9341_STM(TFT_CS, TFT_DC, TFT_RST); // Use hardware SPI

I ordered this from eBay:

http://www.ebay.com/itm/2-4-SPI-TFT-LCD … 2749.l2649

It takes a couple of weeks to reach me. Then I can try it myself.

Could you send the whole project? Then I can add ILI9341 support to my Github repo after I get it working.

Did you try the QVGA version?

[indrekluuk – Sat Jul 01, 2017 6:30 am] –

I ordered this from eBay:

http://www.ebay.com/itm/2-4-SPI-TFT-LCD … 2749.l2649

I ordered exactly the same LCD from the same seller a couple of days ago. Let’s see if we get the same on-board controller.

[indrekluuk – Sat Jul 01, 2017 6:30 am] –

This is a little strange. To me it seems that you shouldn’t need byte swapping.

I ordered this from eBay:

http://www.ebay.com/itm/2-4-SPI-TFT-LCD … 2749.l2649

It takes a couple of weeks to reach me. Then I can try it myself.

Could you send the whole project? Then I can add ILI9341 support to my Github repo after I get it working.

Did you try the QVGA version?

Sorry. I’ve not had time to try it yet.

#include <SPI.h>

#include <SD.h>

File myFile;

void setup() {

SPI.setModule(1);

Serial.begin(115200);

delay(4000);

Serial.print("Initializing SD card...");

if (!SD.begin(PA4)) {

Serial.println("initialization failed!");

return;

}

Serial.println("initialization done.");

if (SD.remove("programs.txt")) {

Serial.println("Removing old file");

} else {

Serial.println("Nothing to remove");

}

// open the file. note that only one file can be open at a time,

// so you have to close this one before opening another.

myFile = SD.open("programs.txt", FILE_WRITE);

// if the file opened okay, write to it:

if (myFile) {

Serial.print("Writing to file...");

myFile.println("testing 1, 2, 3.");

// close the file:

myFile.close();

Serial.println("done.");

} else {

// if the file didn't open, print an error:

Serial.println("error opening file");

}

// re-open the file for reading:

myFile = SD.open("programs.txt");

if (myFile) {

Serial.print("Opening file : ");

// read from the file until there's nothing else in it:

while (myFile.available()) {

Serial.write(myFile.read());

}

// close the file:

myFile.close();

} else {

// if the file didn't open, print an error:

Serial.println("error opening file");

}

}

void loop() {

// nothing happens after setup

}

PB3 and PB4 must be enabled, otherwise they are used for swd/jtag/debug.

Why sd.begin with PA4?

disableDebugPorts();

Sorry, but I think your issue is not related to this topic (see title).

Could you please open another thread in another thread group (for example, here: http://www.stm32duino.com/viewforum.php?f=9)?

thanks.

I don’t understand why the SPI screen uses different pins than the normal SPI pins (they are free). For example, the normal MISO pin is PA6, but PA3 was used for the screen. The same goes for the SS pin.

I do not understand your issue. You may connect the TFT to SPI1 and the SD card to SPI2, or vice versa. You may use any pin for the card’s CS, and likewise any pin you want for the TFT’s CS.

With MM/BP when using SPI1 only you may try

TFT CS - PA2

TFT MISO - PA6 - MISO SD

TFT SCK - PA5 - SCK SD

TFT MOSI - PA7 - MOSI SD

- PA3 - CS SD

For CS pins you can select any of the remaining pins, including PA4 (I am using it in my projects as well) or any other of PA0..PA3.

But don’t forget to set the mode as OUTPUT for the CS pins! (I don’t see that in your code…)

pinMode(SD_CS, OUTPUT);

Again, when using single SPI for both you must guarantee your code will not access both tft and sdcard at the same moment (the CS_card and CS_tft cannot be low at the same time).
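One way to keep that guarantee in one place is a tiny selector object that always releases the other device before asserting a chip select. The sketch below is plain C++ so it can be tried on a PC; SharedSpiSelect is a hypothetical helper (not part of the SPI, SD or Adafruit libraries), and the writePin callback stands in for digitalWrite(). On the board the libraries usually drive CS themselves, so treat this as an illustration of the invariant rather than a drop-in fix.

```cpp
#include <cassert>
#include <functional>
#include <utility>

// Hypothetical helper: owns both chip-select lines of a shared SPI bus.
// writePin(pin, high) is a stand-in for digitalWrite(pin, HIGH/LOW).
class SharedSpiSelect {
public:
    SharedSpiSelect(int tftCs, int sdCs, std::function<void(int, bool)> writePin)
        : tftCs_(tftCs), sdCs_(sdCs), writePin_(std::move(writePin)) {
        deselectAll();  // both CS lines idle high
    }
    // Always release the other device before asserting this one,
    // so the two CS lines are never low at the same time.
    void selectTft() { writePin_(sdCs_, true); writePin_(tftCs_, false); }
    void selectSd() { writePin_(tftCs_, true); writePin_(sdCs_, false); }
    void deselectAll() { writePin_(tftCs_, true); writePin_(sdCs_, true); }

private:
    int tftCs_;
    int sdCs_;
    std::function<void(int, bool)> writePin_;
};

// Demo with two fake pins (0 = TFT CS, 1 = SD CS) held in an array.
inline void demoSharedSelect() {
    bool level[2] = {false, false};
    SharedSpiSelect bus(0, 1, [&level](int pin, bool high) { level[pin] = high; });
    assert(level[0] && level[1]);   // both deselected after construction
    bus.selectSd();
    assert(level[0] && !level[1]);  // only the SD card is selected
    bus.selectTft();
    assert(!level[0] && level[1]);  // only the TFT is selected
}
```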

srp

TFT CS(SS) - PA2

TFT A0(MISO) - PA3

TFT SCK - PA5 - SCK SD

- PA6 - MISO SD

TFT SDA(MOSI) - PA7 - MOSI SD

- PB3 - CS(SS) SD

PA2 & PB3 both need the lines to set them as outputs and to set both high initially.

stephen

Use SdFat, for example:

#include "SPI.h"

#include "SdFat.h"

const uint8_t chipSelect = PA3;

SdFat sd;

SdFile file;

void setup() {

delay(3000);

Serial.begin(115200);

if (!sd.begin(chipSelect, SD_SCK_MHZ(18))) {

Serial.println("INIT ERROR");

sd.initErrorHalt(); // prints out sdfat's error codes

} else {

Serial.println("INIT OK");

}

delay(500);

}

void loop() {

}

I’ve just deleted my post about you meaning pb3 not pa3, btdtgt

stephen

The workaround is to issue a dummy 8bit SPI transaction after the CS goes High (after the sdcard is deselected).

When hanging an Sdcard and a TFT on the same SPI bus this may cause an issue.

But I think it has been fixed at least in Sdfat already..

And this is not good for SdFat…

You could remove “noInterrupts();” from the init method and disable them only temporarily during line read:

noInterrupts();

camera.readLine();

interrupts();

now my main.cpp looks like:

#include "main.h"

#include "Arduino.h"

#include "src/camera/buffered/BufferedCameraOV7670.h"

#include "src/camera/buffered/stm32_72mhz/BufferedCameraOV7670_QQVGA_30hz.h"

#include "src/camera/buffered/stm32_72mhz/BufferedCameraOV7670_QQVGA.h"

#include <Adafruit_ILI9341_STM.h> // STM32 DMA Hardware-specific library

#include <SD.h>

File myFile;

#define TFT_CS PA2

#define TFT_DC PA0

#define TFT_RST PA1

BufferedCameraOV7670_QQVGA camera(CameraOV7670::PIXEL_RGB565, BufferedCameraOV7670_QQVGA::FPS_15_Hz);

//BufferedCameraOV7670_QQVGA_30hz camera(CameraOV7670::PIXEL_RGB565);

//BufferedCameraOV7670<uint16_t, 320, uint8_t, 160, uint8_t, 120> camera(

// CameraOV7670::RESOLUTION_QQVGA_160x120,

// CameraOV7670::PIXEL_RGB565,

// 4);

Adafruit_ILI9341_STM tft = Adafruit_ILI9341_STM(TFT_CS, TFT_DC, TFT_RST); // Use hardware SPI

void initLiveOV7670()

{

// Serial.begin();

pinMode(PC13,OUTPUT);// flashing frame LED

pinMode(PB10, INPUT);

tft.begin();

tft.setRotation(3);

bool cameraInitialized = camera.init();

if (cameraInitialized) {

tft.fillScreen(ILI9341_BLACK);

} else {

tft.fillScreen(ILI9341_RED);

while(1);

}

// noInterrupts();

}

inline void sendLineToDisplay() __attribute__((always_inline));

//inline void screenLineStart(void) __attribute__((always_inline));

//inline void screenLineEnd(void) __attribute__((always_inline));

inline void sendPixelByte(uint8_t byte) __attribute__((always_inline));

inline void pixelSendingDelay() __attribute__((always_inline));

//static const uint16_t lineLength = 320;

//static const uint16_t lineCount = 240;

// Normally it is a portrait screen. Use it as landscape

//uint8_t screen_w = 320;

uint8_t screen_h = 240;

uint8_t screenLineIndex;

// this is called in Arduino loop() function

void processFrame() {

screenLineIndex = screen_h;

digitalWrite(PC13,!digitalRead(PC13));

String strser = "";

while (Serial.available () > 0) {

strser += char(Serial.read ());

}

if (strser != ""){

if (strser.startsWith("test")){ // Send a test packet via the nrf24l01 and run an SD card test

tft.fillScreen(ILI9341_WHITE);

pinMode(PA2, OUTPUT);

pinMode(PB3, OUTPUT);

digitalWrite(PA2, HIGH);

digitalWrite(PB3, LOW);

frame_sd();

digitalWrite(PA2, LOW);

digitalWrite(PB3, HIGH);

tft.begin();

tft.setRotation(3);

tft.fillScreen(ILI9341_WHITE);

}

}

camera.waitForVsync();

noInterrupts();

for (uint8_t i = 0; i < camera.getLineCount(); i++) {

camera.readLine();

sendLineToDisplay();

}

interrupts();

}

uint8_t dispBuf[320*2];

uint8_t b1;

uint8_t *tdBufEnd = dispBuf + 320*2;

void sendLineToDisplay()

{

uint8_t *cBufPtr = (uint8_t *)camera.getPixelBuffer();

uint8_t *tdBuf = dispBuf;

// pixel duplicate and byte swap

while(tdBuf < tdBufEnd)

{

b1=*cBufPtr++;

*tdBuf++=*cBufPtr;

*tdBuf++=b1;

*tdBuf++=*cBufPtr++;

*tdBuf++=b1;

}

//send the same line twice

// Note. SPI.dmaSendAsync() is also OK and probably better, but I went back to the blocking version last time I was testing things

SPI.dmaSend((uint8_t *)dispBuf,camera.getPixelBufferLength());

SPI.dmaSend((uint8_t *)dispBuf,camera.getPixelBufferLength());

}

void init_sd(void){

SPI.setModule(1);

Serial.print("Initializing SD card...");

if (!SD.begin(PB3)) {

Serial.println("initialization failed!");

return;

}

Serial.println("initialization done.");

}

void check_sd(void){

if (SD.remove("programs.txt")) {

Serial.println("Removing old file");

} else {

Serial.println("Nothing to remove");

}

// open the file. note that only one file can be open at a time,

// so you have to close this one before opening another.

myFile = SD.open("programs.txt", FILE_WRITE);

// if the file opened okay, write to it:

if (myFile) {

Serial.print("Writing to file...");

myFile.println("testing 1, 2, 3.");

// close the file:

myFile.close();

Serial.println("done.");

} else {

// if the file didn't open, print an error:

Serial.println("error opening file");

}

// re-open the file for reading:

myFile = SD.open("programs.txt");

if (myFile) {

Serial.print("Opening file : ");

// read from the file until there's nothing else in it:

while (myFile.available()) {

Serial.write(myFile.read());

}

// close the file:

myFile.close();

} else {

// if the file didn't open, print an error:

Serial.println("error opening file");

}

}

CameraOV7670::PixelFormat pixelFormat = CameraOV7670::PIXEL_RGB565;

inline void endOfFrame_sd(void) __attribute__((always_inline));

inline void endOfLine_sd(void) __attribute__((always_inline));

inline void sendNextPixelByte_sd() __attribute__((always_inline));

inline void sendPixelByteH_sd(uint8_t byte) __attribute__((always_inline));

inline void sendPixelByteL_sd(uint8_t byte) __attribute__((always_inline));

static const uint16_t lineLength = 160;

static const uint16_t lineCount = 120;

uint8_t lineBuffer [lineLength*2 + 1 + 5];

uint16_t lineBufferIndex = 0;

void frame_sd(void){

SD.remove("frame");

myFile = SD.open("frame", FILE_WRITE);

if (myFile) {

camera.waitForVsync();

for (uint16_t y = 0; y < lineCount; y++) {

lineBufferIndex = 0;

lineBuffer[0] = 0; // first byte from Camera is half a pixel

for (uint16_t x = 1; x < lineLength*2+1; x+=5) {

// start sending first bytes while reading pixels from camera

sendNextPixelByte_sd();

// we can read 5 bytes from camera while one byte is sent over UART

camera.waitForPixelClockRisingEdge();

lineBuffer[x] = camera.readPixelByte();

camera.waitForPixelClockRisingEdge();

lineBuffer[x+1] = camera.readPixelByte();

camera.waitForPixelClockRisingEdge();

lineBuffer[x+2] = camera.readPixelByte();

camera.waitForPixelClockRisingEdge();

lineBuffer[x+3] = camera.readPixelByte();

camera.waitForPixelClockRisingEdge();

lineBuffer[x+4] = camera.readPixelByte();

}

// send rest of the line

while (lineBufferIndex < lineLength * 2) {

sendNextPixelByte_sd();

}

endOfLine_sd();

}

endOfFrame_sd();

myFile.close();

}

}

void sendNextPixelByte_sd() {

uint8_t byte = lineBuffer[lineBufferIndex];

uint8_t isLowPixelByte = lineBufferIndex & 0x01;

// make pixel color always slightly above 0 since zero is end of line

if (isLowPixelByte) {

sendPixelByteL_sd(byte);

} else {

sendPixelByteH_sd(byte);

}

lineBufferIndex++;

}

void sendPixelByteH_sd(uint8_t byte) {

// RRRRRGGG

myFile.print(byte | 0b00001000);

}

void sendPixelByteL_sd(uint8_t byte) {

// GGGBBBBB

myFile.print(byte | 0b00100001);

}

void endOfFrame_sd() {

// frame width

myFile.print((lineLength >> 8) & 0xFF);

myFile.print(lineLength & 0xFF);

// frame height

myFile.print((lineCount >> 8) & 0xFF);

myFile.print(lineCount & 0xFF);

// pixel format

myFile.print((pixelFormat));

myFile.print(0xFF);

myFile.print(0x00);

myFile.print(0x00);

myFile.print(0x00);

}

void endOfLine_sd() {

myFile.print(0x00);

}

void sendPixelByte(uint8_t byte) {

spi_tx_reg(SPI1, byte);

//SPI.transfer(byte);

// this must be adjusted if sending loop has more/less instructions

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

}

void pixelSendingDelay() {

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

asm volatile("nop");

}

myFile.print(byte | 0b00001000);

myFile.write(byte);

I tried the write function but it gives me exactly the same result, so the problem is somewhere else.

As far as I know the OV7660 raw images are not RGB values because there is a bayer filter and so the colours are packed differently.

The code used by ComputerNerd uses a converter.

https://github.com/ComputerNerd/RawCame … -converter

- Attachment: frame 1.png

Red:

- Attachment: R.jpg

as it seems that each line is moving right, maybe the length of the line is too long

both are daft ideas of course

stephen

- Attachment: Clipboard02.jpg

Also, I do not understand how you can read the above data from the SD card file if you are writing it as binary. It would be an unreadable mess in binary, yet I see nice digits from 0 to 9 there. That is not binary.

1189173140109771091401652004924571714157491421671069910616720712510810775431071072002252410110
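A small host-side sketch of why the file can end up as a run of digits, assuming Arduino's usual Print behaviour where print() of an integer emits decimal text and write() of a single byte emits the raw byte. asPrinted and asWritten are hypothetical stand-ins that only model what lands in the file, and the three sample byte values are picked purely for illustration.

```cpp
#include <cassert>
#include <cstdint>
#include <string>

// Models print(int): the value is written as decimal ASCII digits.
std::string asPrinted(uint8_t byte) { return std::to_string(static_cast<int>(byte)); }

// Models write(uint8_t): exactly one raw byte is written.
std::string asWritten(uint8_t byte) { return std::string(1, static_cast<char>(byte)); }

inline void demoPrintVsWrite() {
    const uint8_t sample[] = {118, 91, 73};
    std::string printed;
    std::string written;
    for (uint8_t b : sample) {
        printed += asPrinted(b);
        written += asWritten(b);
    }
    assert(printed == "1189173");  // 7 characters, digits run together
    assert(written.size() == 3);   // 3 raw bytes
}
```

Printed output has no separators and a variable number of characters per byte, so the original byte boundaries cannot be recovered reliably, and the file grows to well over the raw frame size.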


Prepare the data in a buffer and then use

myFile.write(buf, nr_bytes);

That line in the image to me says the line length is short, it should be vertical ??

then you’d have three vertical bands, locate the start of each band and from the start points

possibly combine the three values into ‘something’, pixel, colour code etc

something bugging me, istr something about difference values in relation to video signals ?? red-green ?? red-blue ???

stephen

It should be exactly 120*160*2 = 38400 bytes (2 bytes per pixel in RGB565 format)
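The same arithmetic as a quick check, in plain C++. frameBytes and fitsInRam are made-up helper names, and the 20 KB figure in the demo is only an example of a smaller RAM size for comparison with the 64 KB parts mentioned in this thread.

```cpp
#include <cassert>
#include <cstddef>

// Raw frame size in bytes; RGB565 uses 2 bytes per pixel.
constexpr std::size_t frameBytes(std::size_t width, std::size_t height,
                                 std::size_t bytesPerPixel = 2) {
    return width * height * bytesPerPixel;
}

// True if a whole frame would fit in the given amount of RAM
// (ignoring everything else the program needs).
constexpr bool fitsInRam(std::size_t frame, std::size_t ramBytes) {
    return frame <= ramBytes;
}

static_assert(frameBytes(160, 120) == 38400, "QQVGA RGB565");
static_assert(frameBytes(320, 240) == 153600, "QVGA RGB565");

inline void demoFrameSize() {
    assert(fitsInRam(frameBytes(160, 120), 64 * 1024));   // QQVGA fits in 64 KB
    assert(!fitsInRam(frameBytes(160, 120), 20 * 1024));  // but not in 20 KB
    assert(!fitsInRam(frameBytes(320, 240), 64 * 1024));  // QVGA does not fit in 64 KB
}
```

A file noticeably larger than 38400 bytes for a QQVGA frame suggests that something other than raw pixel bytes is being written.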

Possibly there are also timing issues. If myFile.print is blocking then you probably miss some pixels.

In the UART example sending data over UART was non-blocking. While I was sending one byte over UART I was reading next 5 bytes from the camera.

Maybe it would be better to use the buffered version (not the UART example that reads one pixel at the time). Then after reading a line from camera you could write the line with some kind of buffered write method to the SD card.

When I receive the ILI9341 display I can try it myself also. I can measure with Oscilloscope if there is enough time to write data to SD card.

Maybe you could try it with the smaller screen. This issue is not hardware dependent, because the smaller screen also has an SD slot. You could connect only the SD card (without the screen) and try to run this code once in the setup function or in the loop and overwrite the file.

My file is about 100 kB, if I remember right; I can’t check because I’m at work right now.

That would be difficult, as SD cards have write latencies (see my post above).

Writing a 38 kB file onto an SD card could take anywhere from 20 ms to XXX ms.

The best way to proceed is to change the board for an F103 with 64 KB RAM or an F407 and save the 38 kB video frame into a buffer.

Or save the picture in smaller chunks, such that the buffer fits into your F103, and then assemble the files on your PC.

Or use FreeRTOS with a FIFO for writing to the SD card (the producer is the task reading bytes from the camera module into the FIFO, and the consumer is the task writing bytes from the FIFO onto the SD card).
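As a rough picture of the FIFO idea, here is a minimal fixed-size byte ring buffer in plain C++. ByteFifo is a hypothetical class written for this post, it is not thread-safe, and it is not FreeRTOS code; in a real FreeRTOS build you would more likely use the RTOS's own queue or stream buffer primitives, or protect the indices appropriately.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Fixed-capacity FIFO: the camera side pushes bytes, the SD side pops them.
template <std::size_t N>
class ByteFifo {
public:
    // Returns false when full; the producer must then drop data or wait.
    bool push(uint8_t b) {
        if (count_ == N) return false;
        data_[head_] = b;
        head_ = (head_ + 1) % N;
        ++count_;
        return true;
    }
    // Returns false when empty; otherwise stores the oldest byte in out.
    bool pop(uint8_t& out) {
        if (count_ == 0) return false;
        out = data_[tail_];
        tail_ = (tail_ + 1) % N;
        --count_;
        return true;
    }
    std::size_t size() const { return count_; }

private:
    std::array<uint8_t, N> data_{};
    std::size_t head_ = 0;
    std::size_t tail_ = 0;
    std::size_t count_ = 0;
};

inline void demoFifo() {
    ByteFifo<2> fifo;
    assert(fifo.push(1) && fifo.push(2));
    assert(!fifo.push(3));  // full: the SD side has fallen behind
    uint8_t b = 0;
    assert(fifo.pop(b) && b == 1);  // bytes come out in arrival order
    assert(fifo.push(3));           // space is available again
}
```

The FIFO only smooths out short write stalls; if the SD card is slower on average than the camera, it will still fill up.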

Write the data to the display in real time, then read it out a line at a time, and send the line to the SD.

Can someone post the link to the QVGA version, or does https://github.com/indrekluuk/LiveOV7670 now default to QVGA?

Or perhaps I need to change something in the latest version from github?

https://github.com/indrekluuk/LiveOV767 … 2/main.cpp

Comment out line 35:

BufferedCameraOV7670_QQVGA camera(CameraOV7670::PIXEL_RGB565, BufferedCameraOV7670_QQVGA::FPS_12_Hz);

Remove comments from line 36:

BufferedCameraOV7670_QVGA camera(CameraOV7670::PIXEL_RGB565, BufferedCameraOV7670_QVGA::FPS_7p5_Hz);

OK.

That’s basically what I tried doing myself, but I can’t recall if it worked for me when I reduced the frame rate.

I know it doesn’t work at 12 fps, as the screen is not fast enough to accept QVGA at 12 fps.

http://www.stm32duino.com/viewtopic.php … 886#p31867

Perhaps we can add that pragma to the files that need -O2 optimisation, so that it would run ok without changing platform.txt

[RogerClark – Sat Jul 15, 2017 9:06 pm] –

One simple solution for memory to store the image is simply to use the display.

Write the data to the display in real time, then read it out a line at a time, and send the line to the SD.

This is a very good idea! Thanks. I’ve searched over the internet and found some examples and now I’m trying to get it working on STM32 but without any success. It freezes when I’m trying to read the pixel. (I was thinking about reading pixel by pixel when getting the whole image from TFT GRAM) Here is what I did.

In Adafruit_ILI9341_STM.h I added

//Pio *_dcport;

volatile uint8_t *_dcport, *_csport;

uint8_t _cspinmask, _dcpinmask;

SPISettings _spiSettings;

uint8_t readdata(void),

readcommand8(uint8_t reg, uint8_t index = 0);

// Enables CS

inline __attribute__((always_inline))

void enableCS(){

*_csport &= ~_cspinmask;

}

// Disables CS

inline __attribute__((always_inline))

void disableCS() {

*_csport |= _cspinmask;

}

__attribute__((always_inline))

void spiwrite16(uint16_t d) {

SPI.transfer(highByte(d));

SPI.transfer(lowByte(d));

}

// Writes commands to set the GRAM area where data/pixels will be written

void setAddr_cont(uint16_t x, uint16_t y, uint16_t w, uint16_t h)

__attribute__((always_inline)) {

writecommand_cont(ILI9341_CASET); // Column addr set

setDCForData();

write16_cont(x); // XSTART

write16_cont(x+w-1); // XEND

writecommand_cont(ILI9341_PASET); // Row addr set

setDCForData();

write16_cont(y); // YSTART

write16_cont(y+h-1); // YEND

}

// Sets DC to Data (1)

inline __attribute__((always_inline))

void setDCForData() {

*_dcport |= _dcpinmask;

}

// Sets DC to Command (0)

inline __attribute__((always_inline))

void setDCForCommand(){

*_dcport &= ~_dcpinmask;

}

// Enables CS, sets DC for Command, writes 1 byte

// Does not disable CS

inline __attribute__((always_inline))

void writecommand_cont(uint8_t c) {

setDCForCommand();

enableCS();

write8_cont(c);

}

// Enables CS, sets DC for Data and reads 1 byte

// Does not disable CS

__attribute__((always_inline))

uint8_t readdata8_cont() {

setDCForData();

enableCS();

return read8_cont();

}

// Reads 1 byte

__attribute__((always_inline))

uint8_t read8_cont() {

return SPI.transfer(ILI9341_NOP);

}

__attribute__((always_inline))

uint8_t read8_last() {

uint8_t r = SPI.transfer(ILI9341_NOP);

disableCS();

return r;

}

// Writes 2 bytes

// CS, DC have to be set prior to calling this method

__attribute__((always_inline))

void write16_cont(uint16_t d) {

spiwrite16(d);

}

// writes 1 byte

// CS and DC have to be set prior to calling this method

__attribute__((always_inline))

void write8_cont(uint8_t c){

spiwrite(c);

}

__attribute__((always_inline))

void beginTransaction() {

SPI.beginTransaction(_spiSettings);

}

__attribute__((always_inline))

void endTransaction() {

SPI.endTransaction();

}

FYI.

I’ve added a modified version of @mtiutiu’s optimisation menu to the F1 core.

This means you don’t need to modify your platform.txt to make @indrekluuk’s liveOV7670 code run, as you can pick the -O2 optimisation from the menu.

Actually I’ve found that liveOV7670 runs fine with -O1, -O2 or -O3 optimisation. The only optimisation it doesn’t work with is our current default of -Os (small code size).

// Sets DC to Command (0)

inline __attribute__((always_inline))

void setDCForCommand(){

*_dcport &= ~_dcpinmask;

}

//Pio *_dcport;

volatile uint8_t *_dcport, *_csport;

uint8_t _cspinmask, _dcpinmask;

#define TFT_CS PA2

#define TFT_DC PA0

#define TFT_RST PA1

Try hard-coding it as the fixed value 0x0001.

changing it to

inline __attribute__((always_inline))

void setDCForCommand(){

Serial.println("ok1");

*_dcport = 0x0001;

Serial.println("ok2");

}

The library already has vars for the ports and uses them without any problems e.g.

void Adafruit_ILI9341_STM::writecommand(uint8_t c) {

*dcport &= ~dcpinmask;

*csport &= ~cspinmask;

spiwrite(c);

*csport |= cspinmask;

}

Are you using this code as an example ?

[konczakp – Mon Jul 31, 2017 10:32 am] –

No. I found it in some library for the ILI9341, but I couldn’t get the whole lib to work, so I extracted only the getpixel function and am now trying to run it.

Ah..

That explains a lot.

The STM32 version of the Adafruit library has a lot of changes from the AVR version, so you can’t simply cut and paste code from the AVR version into the STM32 version.

I’d recommend you write the new function yourself, as it doesn’t look that complex.

Perhaps base it on the Adafruit_ILI9341_STM::drawPixel functionality in the STM32 version of the lib.

You’ll also need to copy

void Adafruit_ILI9341_STM::setAddrWindow(uint16_t x0, uint16_t y0, uint16_t x1,

uint16_t y1)

So make a new function that sets up a pixel to be read.

But it would look very similar to setAddrWindow.

It’s documented in

https://cdn-shop.adafruit.com/datasheets/ILI9341.pdf

On page 116, entitled 8.2.24. Memory Read (2Eh)

Edit.

Note the thing about

If Memory Access control B5 = 0:

and

If Memory Access control B5 = 1:

which seems to control if pixels are read sequentially along each row / line or down each column

1.

uint16_t Adafruit_ILI9341_STM::readPixel(int16_t x, int16_t y)

{

beginTransaction();

//setAddr_cont(x, y, x + 1, y + 1); ? should it not be x,y,x,y?

//setAddr_cont(x, y, 1, 1);

writecommand(0x2A); // Column addr set

spiwrite16(x); // XSTART

spiwrite16(x); // XEND

writecommand(0x2B); // Row addr set

spiwrite16(y); // YSTART

spiwrite16(y); // YEND

writecommand(0x2E); // read from GRAM

SPI.transfer(ILI9341_NOP); // dummy read

uint8_t red = SPI.transfer(ILI9341_NOP);

uint8_t green = SPI.transfer(ILI9341_NOP);

uint8_t blue = SPI.transfer(ILI9341_NOP);

uint16_t color = color565(red, green, blue);

endTransaction();

return color;

}
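For reference, the last step of that function (three colour bytes in, one 16-bit pixel out) can be tried on its own. This sketch assumes color565 does the usual truncation from 8 bits per channel to 5-6-5; rgb888To565 is a stand-alone name used here so the example does not depend on the library.

```cpp
#include <cassert>
#include <cstdint>

// Keep the top 5 bits of red, top 6 of green and top 5 of blue,
// packed as RRRRRGGG GGGBBBBB.
constexpr uint16_t rgb888To565(uint8_t r, uint8_t g, uint8_t b) {
    return static_cast<uint16_t>(((r & 0xF8) << 8) | ((g & 0xFC) << 3) | (b >> 3));
}

static_assert(rgb888To565(255, 0, 0) == 0xF800, "pure red");
static_assert(rgb888To565(0, 255, 0) == 0x07E0, "pure green");
static_assert(rgb888To565(0, 0, 255) == 0x001F, "pure blue");

inline void demoColor565() {
    assert(rgb888To565(255, 255, 255) == 0xFFFF);
    assert(rgb888To565(0, 0, 0) == 0x0000);
    // The low bits of each channel are discarded.
    assert(rgb888To565(7, 3, 7) == 0x0000);
}
```

If the value read back from the display does not convert to the colour that was written, the byte order or the dummy read at the start is the first thing to check.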

So process the read-back pixel data as 16-bit data.

Or, before you read pixels back in 8-bit mode, first set the SPI data size to 8 bit using this line:

if (hwSPI) SPI.setDataSize(0);

http://qyx.krtko.org/projects/ov2640_stm32/

The interesting part of this is that it apparently reads from the IDR register in 8-bit mode using DMA. I wonder if it really works, because the reference manual says that those registers shall be accessed as words (32-bit mode).

That’s very interesting.

Where does it set up the 8-bit reads?

I can see this function call

dmaStreamSetPeripheral(dmastp, (uint8_t *)(&(GPIOE->IDR)) + 1);

It is reading the upper 8 bits (8..15) of the GPIOE port:

https://github.com/iqyx/ov2640-stm32/bl … ain.c#L856

and I also intend to use GPIOB 8..15.
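
To make the "+ 1" in that call concrete, here is a small host-side sketch of why a byte read one address above the register start returns bits 8..15. It assumes a little-endian memory layout (as on Cortex-M and most PCs) and only models the addressing. It says nothing about whether the peripheral bus accepts byte accesses. The function name is made up for illustration.

```cpp
#include <cstdint>
#include <cstring>

// Return the byte stored one address above the start of a 32-bit word,
// i.e. the same "+ 1" offset used in the dmaStreamSetPeripheral call.
// On a little-endian machine this is bits 8..15 of the value.
uint8_t byteAtOffsetOne(uint32_t idrValue)
{
    uint8_t bytes[sizeof idrValue];
    std::memcpy(bytes, &idrValue, sizeof idrValue);
    return bytes[1];
}
```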

I just realized that this is running on F4 disco (which was not clear from the blog).

So in this case (for F4) the 8 bit reading of IDR probably works.

If it does not work, some extra stuff (byte re-ordering) will be necessary to bring the read data in a form accepted by the LCD.
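
One possible reading of that "extra stuff", as a sketch only: if the DMA had to capture full 16-bit IDR values, the camera byte would sit in bits 8..15 of every sample, and software would have to squeeze those bytes into a contiguous buffer before sending them to the LCD. The function name and the buffer layout below are assumptions for illustration.

```cpp
#include <cstddef>
#include <cstdint>

// Copy the upper byte (bits 8..15) of each 16-bit port sample into a
// compact byte buffer. `out` must have room for `count` bytes.
void extractHighBytes(const uint16_t *samples, size_t count, uint8_t *out)
{
    for (size_t i = 0; i < count; ++i) {
        out[i] = static_cast<uint8_t>(samples[i] >> 8);
    }
}
```

This costs one pass over the line buffer per line, which is why the 8-bit DMA read is attractive if it works.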

EDIT

The F4 reference manual does not confirm this trick either (see RM0090, chap. 8.4.5):

Bits 15:0 IDRy: Port input data (y = 0..15)

These bits are read-only and can be accessed in word mode only. They contain the input

value of the corresponding I/O port.

Hopefully if it works on the F4 it will work on the F1

uint16_t Adafruit_ILI9341_STM::readPixel_simple(int16_t x, int16_t y)

{

*dcport &= ~dcpinmask; // writecommand(uint8_t c)

*csport &= ~cspinmask; // writecommand(uint8_t c)

spiwrite(ILI9341_CASET); // writecommand(uint8_t c)

*csport |= cspinmask; // writecommand(uint8_t c)

*dcport |= dcpinmask;

*csport &= ~cspinmask;

SPI.write(x);

SPI.write(x+1); // maybe only x

*dcport &= ~dcpinmask; // writecommand(uint8_t c)

*csport &= ~cspinmask; // writecommand(uint8_t c)

spiwrite(ILI9341_PASET); // writecommand(uint8_t c)

*csport |= cspinmask; // writecommand(uint8_t c)

*dcport |= dcpinmask;

*csport &= ~cspinmask;

SPI.write(y);

SPI.write(y+1); // maybe only y

*dcport &= ~dcpinmask; // writecommand(uint8_t c)

*csport &= ~cspinmask; // writecommand(uint8_t c)

spiwrite(ILI9341_RAMWR); // writecommand(uint8_t c)

*csport |= cspinmask; // writecommand(uint8_t c)

*dcport &= ~dcpinmask; // writecommand(uint8_t c)

*csport &= ~cspinmask; // writecommand(uint8_t c)

spiwrite(ILI9341_RAMRD); // writecommand(uint8_t c)

*csport |= cspinmask; // writecommand(uint8_t c)

*dcport |= dcpinmask;

*csport &= ~cspinmask;

SPI.transfer(0x00); // dummy read

*csport |= cspinmask;

*dcport |= dcpinmask;

*csport &= ~cspinmask;

Serial.print(SPI.transfer(0x00)); // RED read

*csport |= cspinmask;

*dcport |= dcpinmask;

*csport &= ~cspinmask;

Serial.print(SPI.transfer(0x00)); // GREEN read

*csport |= cspinmask;

*dcport |= dcpinmask;

*csport &= ~cspinmask;

Serial.println(SPI.transfer(0x00)); // BLUE read

*csport |= cspinmask;

return 0; // placeholder: the channels are only printed above, not combined into a color

}

...

spiwrite(ILI9341_RAMRD); // writecommand(uint8_t c)

*dcport |= dcpinmask;

SPI.transfer(0x00); // dummy read ------------------- will read 16 bits !

Serial.print(SPI.transfer(0x00)); // RED read------------------- will read 16 bits !

Serial.print(SPI.transfer(0x00)); // GREEN read------------------- will read 16 bits !

Serial.println(SPI.transfer(0x00)); // BLUE read------------------- will read 16 bits !

*csport |= cspinmask;
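
If the SPI is left in 16-bit mode, the comments above mean the dummy, red, green and blue bytes arrive packed two per transfer. Here is a sketch of how they could be unpacked, assuming MSB-first 16-bit frames and the dummy, R, G, B byte order used in readPixel. The struct and function names are made up for illustration.

```cpp
#include <cstdint>

struct Rgb888 {
    uint8_t red;
    uint8_t green;
    uint8_t blue;
};

// word0 is assumed to hold (dummy << 8) | red and word1 to hold
// (green << 8) | blue, i.e. two MSB-first 16-bit SPI frames.
Rgb888 unpackReadWords(uint16_t word0, uint16_t word1)
{
    Rgb888 px;
    px.red = static_cast<uint8_t>(word0 & 0xFF);  // low byte of first frame
    px.green = static_cast<uint8_t>(word1 >> 8);  // high byte of second frame
    px.blue = static_cast<uint8_t>(word1 & 0xFF); // low byte of second frame
    return px;
}
```

With this layout only two 16-bit transfers are needed after the RAMRD command, not four.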

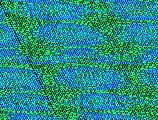

It seems that the trick to let the DMA read only the IDR register high byte works!

The pixel data is read nicely via DMA to the buffer.

The buffer is then written to LCD, 1 to 1, 320×240 pixel resolution.

I used the QVGA 7.5 FPS settings, and the SPI write to the LCD takes only half of a line blanking interval, meaning that we could even run at double the current speed!

There is only one big thing: the colors are miserable, totally wrong, over-saturated, under-saturated…

I tried shifting the data written to the LCD by one byte, but it did not help.

And if there is a lot of motion in the picture, the image is somehow updated much more slowly. The pulses are still OK, but the image content updates slowly.

Only the contours can be distinguished.

I don’t know what kind of settings are used by indrekluuk’s lib, but they do not seem to be the best ones.

It would be interesting to see your code.

Re: Colour order

The trick of shifting one byte will cause issues a lot of the time for the channel that spans the byte boundary (that may be the green channel, but I’d need to double check).

Just a crazy thought, but could we run 2 different DMA transfers from the GPIO that are offset by 1 byte from each other and have a counter increment of 2 bytes?

e.g. DMA 1 writes to memory

Bytes 1, 3, 5 ,7 etc

DMA 2 writes to memory

Bytes 0 , 2 , 4, 6

But DMA 2 would need to be started 1 input clock cycle after DMA 1

One way I can think of:

You could have 2 DMA transfers interleaved by using a timer. High level idea:

Set timer Reload to 2.

Set Timer channel X to 1.

Set the DMA channel connected to the timer channel to transfer from A to B (where A and B can be memory or peripheral addresses). This transfer will happen first, when the timer counter reaches 1.

Set the DMA channel connected to the update event to transfer from C to D (IO or mem, as the above). This transfer will occur second when the timer counter reaches 2. At that point the timer will also reset to 0, and continue counting, next pulse will turn to 1, and cause a new DMA event with the channel compare.

What I can’t see is how to write them to interleaved memory addresses.

The DMA channels can be set to read from an 8-bit port and write to a 16-bit memory position, but in that case the top 8 bits will be 0, so each write will overwrite the top 8 bits. Also, the address would have to be aligned to 16 bits, so effectively both channels would write the lower 8 bits of the same halfword in memory. If they could be aligned to 8 bits, then you could use that 8-bit to 16-bit mode, and the 0 written to the top 8 bits would just be overwritten by the next DMA write. But as I said, if I remember right, the reference manual says the addresses need to be aligned to the configured size, so 16-bit mode needs a 16-bit aligned address.

You could write to 2 different buffers with DMA triggered by the timer, and then use software to interleave them, but that requires software processing.
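
A minimal sketch of that software interleave step, assuming one DMA channel has filled a buffer with the even-numbered bytes and the other with the odd-numbered bytes. The function and parameter names are made up for illustration.

```cpp
#include <cstddef>
#include <cstdint>

// evenBytes supplies output bytes 0, 2, 4, ... and oddBytes supplies
// output bytes 1, 3, 5, ...; `out` must have room for 2 * count bytes.
void interleaveBuffers(const uint8_t *evenBytes, const uint8_t *oddBytes,
                       size_t count, uint8_t *out)
{
    for (size_t i = 0; i < count; ++i) {
        out[2 * i] = evenBytes[i];
        out[2 * i + 1] = oddBytes[i];
    }
}
```

For a 320 pixel line at 2 bytes per pixel, count would be 320 per buffer, so the loop touches 640 bytes per line.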

There is also an interesting mode in the timers. The intention is to be used to reload values to or from multiple Channel Compare Registers with a single event in the timer. I use it to load PWM values to 2 timer channels at once to play stereo files.

Anyway, it works like this:

You set a special configuration in the timer. Then you tell the timer how many transfers you want (1 to 4 if I remember right). Then you configure the DMA to a special timer register, and set the peripheral increment to NO in the DMA. That special register will alternately load the values to each channel that you wanted to use (they need to be consecutive).

When the timer triggers a DMA request, for example on an update event because the counter has reached the reload value, it triggers 2 DMA accesses at once. Like this:

- Timer 1 reaches ARR value and resets to 0.

- Causes Update event

- Update event causes 2 DMA requests.

- DMA channel reads from memory X, and writes to Timer register Y.

- DMA channel reads from Memory X+1 and writes to timer register Y(again).

- Timer keeps counting, and when it reaches ARR repeats from above.
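
The sequence above can be modelled on a PC to check the idea. This is a host-side model only, not register-level code: the struct, its fields and the two-transfer default are assumptions for illustration. As far as I know, the "special register" corresponds to TIMx_DMAR configured through TIMx_DCR in ST’s reference manuals.

```cpp
#include <cstddef>
#include <cstdint>

// Host-side model of the timer burst feature, not real hardware access.
struct TimerBurstModel {
    uint16_t ccr[4] = {0, 0, 0, 0}; // the four compare registers
    size_t base = 0;                // first compare register in the burst
    size_t length = 2;              // DMA transfers per update event
    size_t next = 0;                // position inside the current burst

    // Every write to the special register lands in the next compare
    // register of the burst, then wraps back to the first one.
    void writeBurstRegister(uint16_t value)
    {
        ccr[base + next] = value;
        next = (next + 1) % length;
    }

    // One update event: the DMA reads `length` consecutive memory values
    // and writes each of them to the same burst register address.
    const uint16_t *onUpdate(const uint16_t *memory)
    {
        for (size_t i = 0; i < length; ++i) {
            writeBurstRegister(*memory++);
        }
        return memory; // where the next update event continues reading
    }
};
```

Feeding it a stereo sample buffer laid out as left, right, left, right would load both channels on each update event, which matches the PWM use described above.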